How AI Content Detection is Being Weaponized in the Iran War

shirin anlen, Mahsa Alimardani / Mar 17, 2026

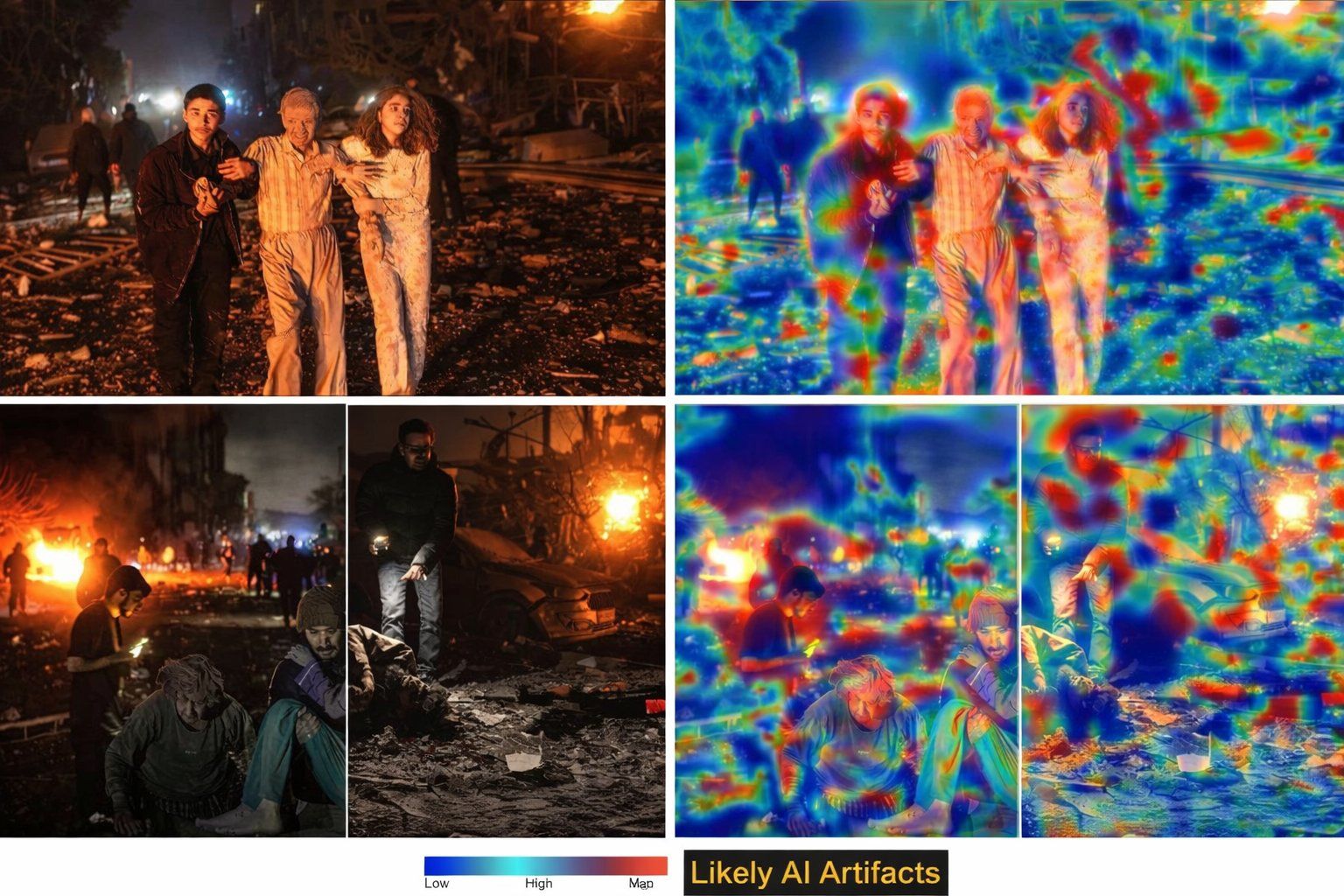

An image purporting to be a heatmap analysis of a photo taken in Iran (described below).

Across social media platforms, AI-generated images, fabricated videos, and repurposed video game footage are spreading at extraordinary speed in the Iran-Israel-US war, which may be remembered as the first conflict where AI overwhelmed the information environment at an unprecedented scale. Synthetic visuals depicting bombings and destruction circulate alongside authentic documentation of real events, making it increasingly difficult to distinguish fact from fiction. Major news organizations, including WIRED, BBC and CNN, have already documented the surge of fake AI war imagery circulating online.

But a more troubling tactic is emerging from this chaos: technical-looking analyses are being weaponized to falsely discredit authentic evidence. None of this is surprising. In fact, it was entirely predictable.

Warnings are now reality

For years, researchers and civil society organizations, including ours, have warned that releasing powerful generative AI tools without meaningful safeguards would eventually collide with geopolitical crises. This war appears to be the culmination of that trajectory. During the Israel-Iran 12-day war of June 2025, we documented what was then an unprecedented surge of AI-generated content during an active conflict. AI content in the form of regime propaganda and Israeli military narratives, along with AI chatbots such as Grok delivering false verdicts on contested footage, were all operating simultaneously, each amplifying the others.

What we are seeing now is that same information environment under conditions of far greater intensity. Eight months later, generative AI tools produce significantly more realistic outputs and are accessible to a far wider cast of actors. The massacres of Iranian protesters in January, in which thousands of Iranians were killed by state security forces, have deepened the fractures within Iranian society and sharpened the information battle between the regime, opposition movements, diaspora communities and foreign states. Each of these actors has distinct interests in shaping how the current conflict is understood.

This collision is unfolding in a context that was already among the most hostile to verification anywhere. Decades of state media manipulation and internet censorship have eroded baseline trust in institutional sources. The Iranian state deploys its media infrastructure to document civilian casualties caused by foreign strikes, yet no comparable infrastructure existed to document the thousands of protesters killed by security forces in January 2026. Authentic documentation of real harm can thus be instrumentalized for propaganda purposes, and dismissed as fake for the same reason. The near-total internet shutdown imposed by the authorities since February 28, with connectivity at 1% of ordinary levels, has severed Iranians from real-time information. The communities most affected have also been cut off from participating in the evidentiary record of their own situation.

The result is an overwhelming volume of content. BBC Verify journalist Shayan Sardarizadeh has noted that this conflict may have already broken records for the amount of AI-generated content going viral during a war. OSINT researcher Tal Hagin has similarly observed that the problem is no longer limited to ordinary social media users being deceived; the volume has outpaced the verification capacity of even professional newsrooms. In this environment, authentic evidence is not just harder to find; it is actively buried.

The consequences are not abstract. When audiences cannot distinguish authentic from manipulated evidence, atrocities become easier to deny. Genuine documentation of civilian harm can be dismissed as “AI.” And crucial questions about the human cost of war become obscured by an avalanche of spectacle.

But amid this already difficult situation, a new phenomenon is emerging that poses a distinct threat to the verification infrastructure that communities living through conflict depend on. Across the cases we have documented, verification tools and methods are being deployed not to confirm the veracity of media artifacts but to provide the appearance of scientific authority for conclusions already reached. The visualizations may be fabricated, the methodology may be fundamentally flawed, or the tool may simply be fed the answer the user wants validated. In each case the outcome is the same: technical-looking evidence attached to a false claim, circulating faster than any correction can follow. This is the weaponization of AI detection and forensic analysis itself.

The heatmap that wasn’t

In one widely shared case, ‘heatmap’ visualizations were used to discredit authentic images from a March 1 strike in Niloofar Square in eastern Tehran.

The photographs were taken by photojournalist Erfan Kouchari for Iran’s Student News Network (SNN), a state-affiliated outlet, distributed through the Parspix/ABACA wire service, and later published by international outlets, including The Telegraph and The Guardian, as authentic photojournalism from the strike site.

Shortly after the images began circulating online, an X user identifying themselves as a visual effects artist posted what they described as “heatmap overlays” and outputs from Gemini and ChatGPT, claiming the photos were “very likely all AI-generated images.” These visualizations spread quickly and were cited as evidence that the images were fake.

Image 1: Alleged “heatmaps” of Erfan Kouchari’s March 1, 2026 photos from Niloofar Square, as posted by an X user.

But the heatmaps themselves appear to be fabricated.

Heatmaps are a form of data visualization that represent values through color intensity across an image or dataset. In image forensics, heatmaps usually highlight irregularities. However, the examples circulating online did not resemble typical forensic heatmaps.

“This appears to be a very unusual heatmap because it does not localize semantically meaningful artifacts,” said Nikos Sarris, a senior researcher at MeVer, who reviewed the images. In his experience, AI-generated image detectors typically analyze images as a whole rather than isolating patches. Localized artifact maps are more characteristic of older forms of manipulation, such as Photoshop inpainting, than of generative AI images. “This obviously is not such a case here,” he added.

Another expert, Dr. İlke Demir, CEO and founder of Cauth AI, a startup focusing on the origins of content, raised similar concerns. “These heatmaps do not look like real ones, and I believe they are AI-generated,” Demir said. “Just look at the legend: ‘Low / High / Map.’ It doesn’t make sense.” For audiences unfamiliar with how forensic tools actually work, however, visualizations presented alongside references to Gemini and ChatGPT carried the appearance of rigorous technical analysis.

Kouchari responded to the accusation on Instagram by posting what he labelled the "Original" and "Edited" versions of the disputed images side by side, showing the same three subjects, a young man, an elderly man, and a girl in pajamas, in identical positions: one version flat and naturally lit, the other heavily post-processed. Such edits may involve filters with embedded AI-based enhancement tools, which can confuse automated verification systems and have the potential to alter meaning, but that was not the case here, and the accusation of AI generation appears to be false. His caption was notable for its resignation as much as its evidence: "Knowing that even uploading the raw photos and hundreds of other proofs for this group who want to attribute these photos to AI will not work, I still compare them." The post received 615 likes before he deleted it.

Images 2 and 3: State-affiliated photojournalist Erfan Kouchari responded to widespread accusations that his images were AI-manipulated by sharing the original and edited versions of his photos on March 2, 2026. Kouchari later deleted the post, but the authors kept screenshots of his carousel of “original” and “edited” versions.

What makes this manipulation tactic particularly effective is how easily technical-looking visuals can create an illusion of authority. Even for experienced investigators, it is easy at first glance to assume that a visualization presented alongside references to AI tools must be legitimate.

What the dispute obscured was that independent corroboration already existed. A second photographer, Hamid Vakili, also shooting for Parspix/ABACA, documented the same scene seconds later from a different position, capturing different victims at the same location. Both sets of images are licensed and credited through Alamy. In other words, the verification infrastructure was functioning: two photographers, same scene, same wire service, accurate, albeit state-supported, documentation. But in an information environment already primed to distrust state-affiliated sources, the heatmaps did not need to be convincing. They needed only to confirm what audiences already suspected.

The illusion of technical authority

In a second case that circulated online, real forensic tools were apparently applied so incorrectly that the results were indistinguishable from fabrication.

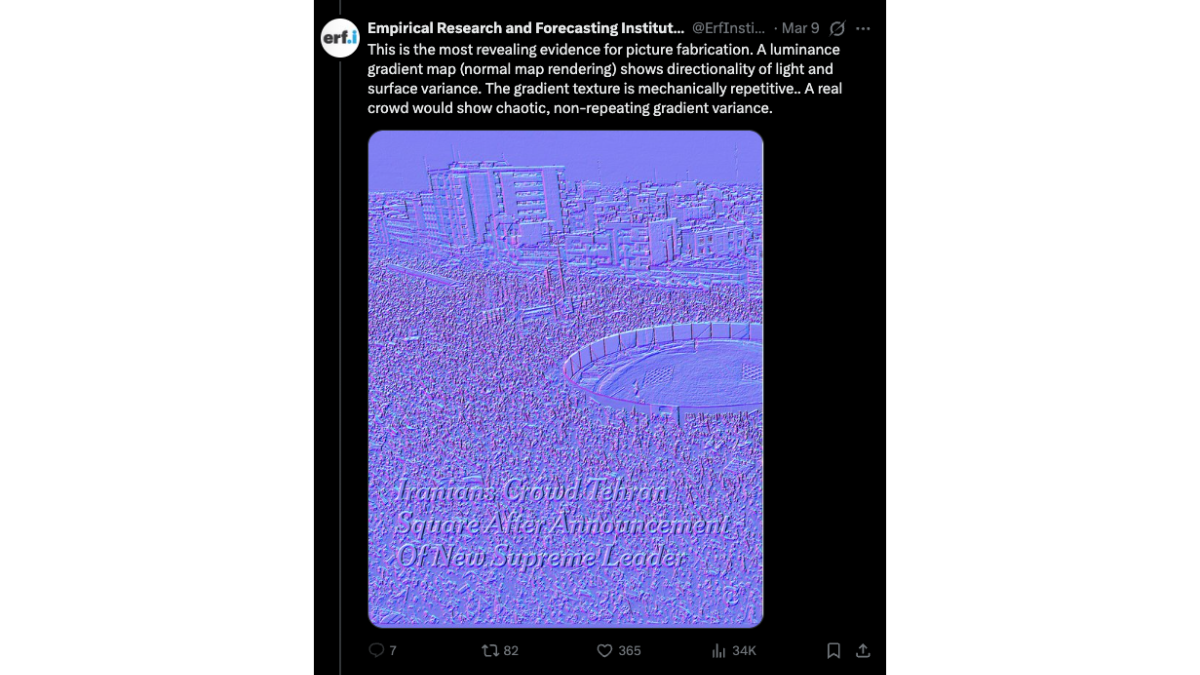

A photograph published by The New York Times on March 9, 2026, documenting crowds gathering in Tehran following the announcement of Mojtaba Khamenei as Iran's new Supreme Leader, became the target of a coordinated discrediting effort. That same day, the X account of the "Empirical Research and Forecasting Institute" (ERFI), which describes itself as co-founded by a PhD scientist based in Australia, posted a thread claiming the image "shows signs of digital manipulation" and was "manufactured" and "fabricated." As evidence, it shared screenshots of forensic analyses including Error Level Analysis (ELA). The thread attracted over 600,000 views on X, with screenshots of the purported forensic analysis spreading further across Instagram and other platforms widely used by Iranian diaspora communities.

Image 4: ERFI shared this viral rendering of an ‘Error Level Analysis’ to claim a New York Times photo from Tehran was AI-generated.

The same account also published a “normal map” render of the same screenshot, presenting it as decisive evidence of fabrication. In computer graphics, a normal map is used in 3D rendering to simulate how light interacts with surface textures; when applied to a flat photograph, it simply converts pixel gradients into colored patterns and is not a forensic tool capable of detecting AI-generated artifacts. In this context, the render functions as what journalist Craig Silverman calls “forensic cosplay”: a technical-looking visualization that suggests hidden analysis while actually serving to manufacture authority for a predetermined claim.

Image 5: ERFI’s “normal map rendering”
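To make the point about normal maps concrete, the following is a minimal Python sketch of how such a render can be derived from a flat image. It is an illustration under stated assumptions, not a reconstruction of ERFI's workflow, which is not documented in detail: the function name `normal_map` and the tiny test image are hypothetical, and brightness is simply treated as if it were surface height. The output depends only on local brightness differences, so any photograph, authentic or synthetic, yields colored patterns wherever it contains edges.

```python
import math

def normal_map(gray):
    """Turn a 2D grid of brightness values (0-255) into RGB 'normal map' pixels.

    Hypothetical helper for illustration: brightness is treated as height,
    and the surface normal at each pixel is encoded as a color.
    """
    h, w = len(gray), len(gray[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            # Brightness differences to neighbouring pixels, clamped at borders.
            dx = gray[y][min(x + 1, w - 1)] - gray[y][max(x - 1, 0)]
            dy = gray[min(y + 1, h - 1)][x] - gray[max(y - 1, 0)][x]
            # Normal of the imagined surface, then scaled to unit length.
            nx, ny, nz = -dx / 255.0, -dy / 255.0, 1.0
            length = math.sqrt(nx * nx + ny * ny + nz * nz)
            # Map each component from [-1, 1] to a color channel in [0, 255].
            rgb = tuple(
                int((c / length * 0.5 + 0.5) * 255 + 0.5) for c in (nx, ny, nz)
            )
            row.append(rgb)
        out.append(row)
    return out

# A 4x4 test image: dark left half, bright right half.
image = [[0, 0, 255, 255] for _ in range(4)]
result = normal_map(image)
flat_pixel = result[0][0]   # no brightness change nearby
edge_pixel = result[0][1]   # sits next to the dark/bright boundary
```

In this sketch, uniform regions all receive the same bluish color, (128, 128, 255), while pixels beside the brightness boundary receive a different one. Nothing in the computation consults how the image was made; it reacts only to edges, which every photograph has.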

The problem was fundamental. As Silverman documented in his Indicator newsletter, ERFI had not run its analysis on the original image but on a screenshot of an Instagram post, including the surrounding platform interface. As Demir explains, screenshots produce compression artifacts that have no bearing on whether an original image is authentic or synthetic. "It seems anyone with an edge detector or map visualization on images becomes an expert nowadays," Demir said.

The New York Times issued a public response noting the analysis "misrepresents standard image compression behavior." But within Iranian diaspora communities, where the screenshots had spread most widely, the conclusion that the image was AI-generated had already taken hold. This 'forensic cosplay' had reached a vastly larger audience than any correction would.

This is the evolution of a familiar manipulation tactic. For years, actors seeking to undermine authentic content have simply labeled it “fake,” and more recently claimed false positives from unreliable detection tools. But the new tactic is more sophisticated: fabricating technical-looking forensic evidence to support those claims. It is the difference between saying “this image is AI” and presenting what appears to be scientific proof. The tactic works particularly well because most people are unfamiliar with how forensic tools actually work.

The result is a dangerous feedback loop: synthetic media undermines trust in real evidence, and fabricated forensic analysis further erodes confidence in verification itself. In this environment, the tools designed to detect manipulation become instruments of manipulation, deployed to deepen doubt and confusion about real events and even real casualties.

This crisis is not simply the result of malicious actors operating in an extremely fragile information ecosystem. It is also the direct consequence of deploying extremely powerful generative technologies without adequate safeguards, alongside a verification infrastructure that was never designed to withstand the speed at which false authority now spreads.

Some trust signals already exist that could help. Content credentials, for instance, could allow provenance information to travel with an image across every platform where it circulates. Adoption remains limited, but even basic content-credential requirements at the photo-agency level could establish an additional layer of trust.
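As a rough illustration of the idea behind provenance that travels with an image, here is a simplified Python sketch. It is not an implementation of any content-credential standard: real systems rely on public-key signatures and manifests embedded in the file, whereas this sketch uses a shared-secret HMAC as a stand-in. The key, function names, and example values are all hypothetical.

```python
import hashlib
import hmac
import json

# Assumption: a stand-in for an agency's real signing credentials.
SIGNING_KEY = b"hypothetical-agency-key"

def make_manifest(image_bytes, photographer, agency):
    """Bind provenance claims to the exact bytes of an image."""
    claim = {
        "photographer": photographer,
        "agency": agency,
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}

def verify(image_bytes, manifest):
    """Check that the manifest is intact and matches the image bytes."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return "manifest altered"
    if hashlib.sha256(image_bytes).hexdigest() != manifest["claim"]["sha256"]:
        return "image does not match manifest"
    return "verified"

original = b"example image bytes"   # placeholder for a real image file
manifest = make_manifest(original, "Example Photographer", "Example Agency")

status_original = verify(original, manifest)
status_modified = verify(original + b" re-encoded", manifest)
```

Here `status_original` is "verified", while `status_modified` is "image does not match manifest". A plain byte hash like this one would also fail after an ordinary platform re-encode or a screenshot, which is one reason practical schemes need platform support to carry provenance through such steps.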

The cases we have documented here are not edge cases. They are a preview of a verification environment in which corrections travel more slowly and less widely than the original false claims, and where authentication tools exist but are not embedded in the spaces where disputes actually happen.

When trust in evidence collapses, the greatest casualty is not only truth online. It is accountability for real-world harm.
