EU Moves to Regulate AI Nudification, But Key Challenges Remain

Marie Seck, Magdalena Maier / Mar 31, 2026

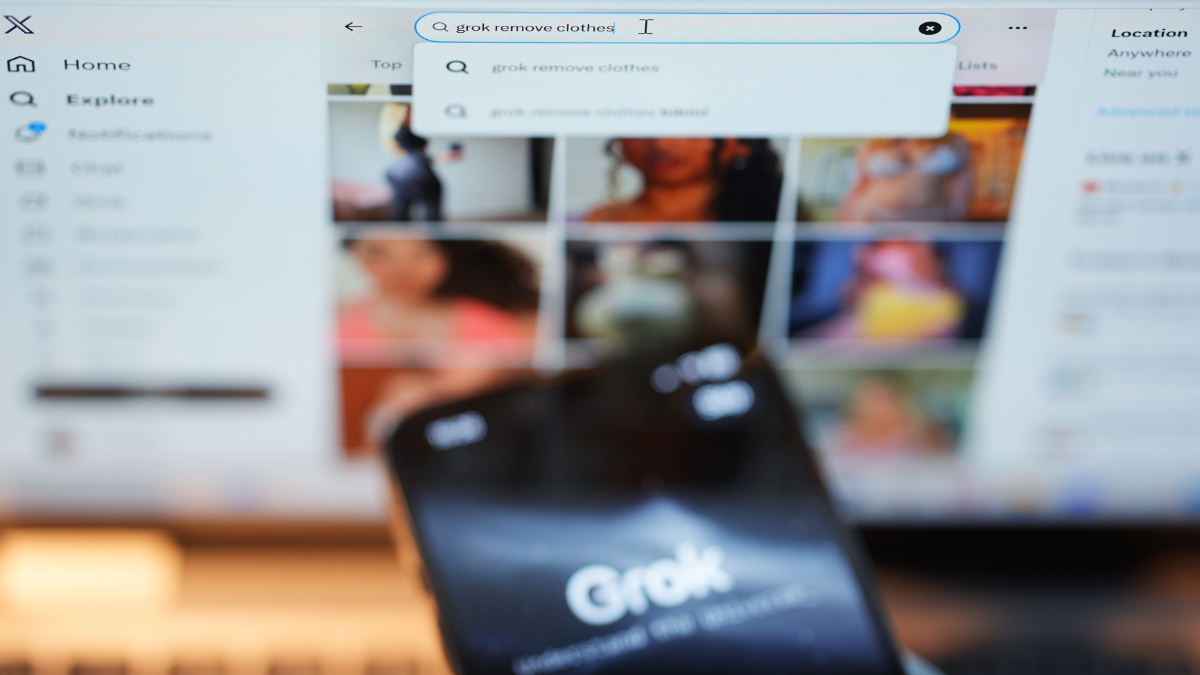

The Grok app on an iPhone pictured against the backdrop of search results displayed on the social media platform X on a laptop. Thursday, January 8, 2026. (Press Association via AP Images)

The recent Grok scandal saw an avalanche of non-consensual sexualized deepfakes of women and girls created and shared directly on X, following the rollout of Grok’s picture-editing capabilities in late December 2025.

This provided a crucial opportunity to test the effectiveness of the existing EU legislative framework in preventing and addressing non-consensual intimate imagery (NCII) and child sexual abuse material (CSAM). Investigations into X were opened almost immediately under both the Digital Services Act (DSA) and the General Data Protection Regulation (GDPR). At the same time, calls for additional safeguards and protections under EU law echoed across the Union. In response, the European Parliament and the Council both moved to introduce a ban on such practices under the AI Act, a notable push for additional restrictions against a rising tide of deregulation.

In a recent brief, the Centre for Democracy and Technology Europe explored the merits and gaps of existing legislation to address this issue. Building on that analysis, we reflect on the existing safeguards, the opportunities, and the challenges that a new ban under the AI Act would need to overcome.

The DSA: a key tool, but not a magic wand

The Digital Services Act is the primary legislation regulating online platforms in the EU and is thus a possible tool to address non-consensual deepfakes when, and only when, they are shared on online platforms. The DSA’s approach includes both pre-emptive and reactive mechanisms for mitigating the proliferation of CSAM and NCII, whether AI-enabled or not.

While the DSA does not include general monitoring obligations, the first key tool lies within the obligation for all online platforms, independently of size, to diligently remove illegal content when they become aware of it. CSAM has long been established as illegal across the Union. While the move towards criminalization of NCII is more recent, this type of imagery is already illegal in many EU member states.

Crucially, 2027 will mark the entry into force of the EU Directive on Combating Violence against Women, which will ensure that several types of technology-facilitated gender-based violence (TFGBV) are uniformly criminalized across the Union. This explicitly includes “producing, manipulating or altering and subsequently making accessible to the public [...] material depicting sexually explicit activities or the intimate parts of a person.”

Other relevant DSA provisions include the obligation for Very Large Online Platforms and Search Engines (VLOP/SEs) to assess and mitigate systemic risks stemming from their platforms. This obligation embeds measures to reduce harms at a broader scale, as it is not necessarily tied to individual pieces of content but rather to the overall risk level arising from the platform’s use or design. In yearly risk assessment reports, VLOPs must communicate to the European Commission and to the public how they have assessed a set of systemic risks and what measures they have taken to mitigate them.

We have argued in the past that TFGBV is an inherently cross-cutting risk relevant to illegal content, fundamental rights, and civic discourse. However, risks of gender-based violence are also explicitly mentioned as systemic risks in the DSA. While these reports are published yearly, VLOP/SEs also have a duty to conduct ad hoc risk assessments ahead of the roll-out of new features within their interface, as the design of the platform is a central consideration in risk assessments. When a VLOP rolls out a new AI-enabled picture-editing feature, as was the case for X last December, it should conduct a comprehensive risk assessment that foresees and prevents an outpouring of NCII. And when such an outpouring occurs, it is more than likely that a good-faith risk assessment was not conducted, in clear violation of the DSA.

There are two things to consider when examining the tools the DSA offers for combating AI-enabled NCII. First, the robustness of the DSA depends in large part on the strength and speed of its enforcement, including of provisions that are less straightforward than others, such as those on risk assessment. In the X case, the EU Commission announced that it had opened an investigation in January. This investigation needs to yield results for the DSA to fulfill its potential.

Second, due to its scope, the DSA can only compel an online platform or search engine to offer and manage its services in accordance with the law’s requirements. As a result, the DSA might be effective in cases involving the integration of AI tools within covered services, as was the case for Grok — where we have seen the EU Commission take action accordingly — but will not be relevant in regulating nudification apps or similar tools that may fall outside of the scope of the DSA. The scope of other EU legislation, such as the AI Act, may, to some extent, be complementary to the DSA.

How a ban under the AI Act could complement existing protections

Short of the obligation to label deepfakes as defined under the AI Act (which neither effectively contributes to preventing the generation of NCII and CSAM nor allows redress for affected individuals), the Act imposes no hard limitations on the creation or dissemination of NCII and CSAM. Under the General-Purpose AI (GPAI) Code of Practice, the occurrence of NCII and CSAM is, however, a relevant risk to be considered by providers of GPAI models with systemic risk. Nevertheless, among all the risks considered, providers need only identify certain risks for assessment and mitigation, leaving it to their discretion whether to include risks of NCII and CSAM. As we have previously argued, a situation where the provider is solely responsible for assessing whether a risk is acceptable and free to choose not to undertake mitigations is far from desirable.

In short, safeguards under the AI Act are few, largely relying on the discretion of providers and the ability and willingness of enforcement authorities to scrutinize compliance. Crucially, these safeguards are limited to image-generation models or systems based on models that qualify as GPAI with systemic risk. Taken together with the DSA’s limited scope, the insufficiency of existing AI Act protections bolstered proposals to introduce a new ban addressing this issue under the AI Act. Both the European Parliament and the Council put forward amendments to add the AI-facilitated generation of NCII to the list of prohibited practices under the AI Act.

For such a ban to fulfill its intended purpose, it must overcome several hurdles. For example, no mitigation method or technical safeguard can fully prevent the generation of NCII and CSAM. This is recognized in the respective proposals of the Council and the Parliament, which only prohibit AI systems where providers have not put effective safeguards in place to prevent the generation of such images. This is a positive first step towards ensuring the ban is workable in practice, yet the proposals overall lack detail on what measures would be considered sufficient.

Given that the “effectiveness” of safeguards may be interpreted differently by providers, it is vital to achieve some level of clarity on when this threshold would be met. Lawmakers should therefore embed requirements for detailed explanations on how the safeguards work, and their projected effectiveness, in a manner that allows public interest technologists to test and verify these claims.

Because both proposals would prohibit only non-consensual intimate imagery, compliance would require some form of consent verification. In addition to questions of effectiveness and feasibility, this might come with privacy risks, with a particular effect on already vulnerable communities, such as sex workers. Any obligation in this context must be thoughtfully crafted to limit the dangers that often accompany identity verification, take into account the perspective of affected individuals, and ensure robust safeguards are in place.

Finally, a strong ban necessarily requires a balancing exercise between the types of content generation that must rightly be outlawed and those that are broadly considered desirable. In practice, a provider would need to find effective measures that walk the fine line between preventing prohibited outputs and allowing outputs excluded from the ban’s scope. As a consequence, co-legislators must be realistic about the potential trade-offs that restricting technical capabilities could have for the creation of lawful content.

In any event, the proposed bans as currently scoped would only cover imagery realistically showing the intimate parts of individuals or a natural person engaged in sexually explicit activities. In practice, many of the images generated by Grok on X (for example, those showing women in bikinis) would therefore not necessarily fall under such a ban, while still having the potential to cause great harm to affected individuals. This shows the limits of any legal solution and the need for a broader, more comprehensive approach to discourage people from creating this content in the first place.

Closing enforcement gaps in the DSA and AI Act

The existing EU legal frameworks present a patchwork landscape of potential avenues that may have a limited impact in tackling the urgent issues raised by the Grok scandal, particularly for victims seeking redress. However, ensuring that providers of GPAI models with systemic risk comply with their obligations and that large online platforms engage in good-faith risk assessment and mitigation processes under the DSA could be a strong improvement.

The proposed prohibition of AI systems generating NCII under the AI Act marks an important step in filling the gaps left under current legislation. With trilogue negotiations under the AI omnibus underway, co-legislators should ensure that any remaining ambiguities and trade-offs under the current proposals are resolved. This will ensure that the ban is both robust and effective.
