Ten Legal and Business Risks of Chatbots and Generative AI

Matthew Ferraro, Natalie Li, Haixia Lin, Louis Tompros / Feb 28, 2023

Matthew F. Ferraro, a senior fellow at the National Security Institute at George Mason University, is a Counsel at WilmerHale; Natalie Li is a Senior Associate, and Haixia Lin and Louis W. Tompros are Partners at WilmerHale.

Introduction

It took just two months from its introduction in November 2022 for the artificial intelligence (AI)-powered chatbot ChatGPT to reach 100 million monthly active users, the fastest growth of a consumer application in history.

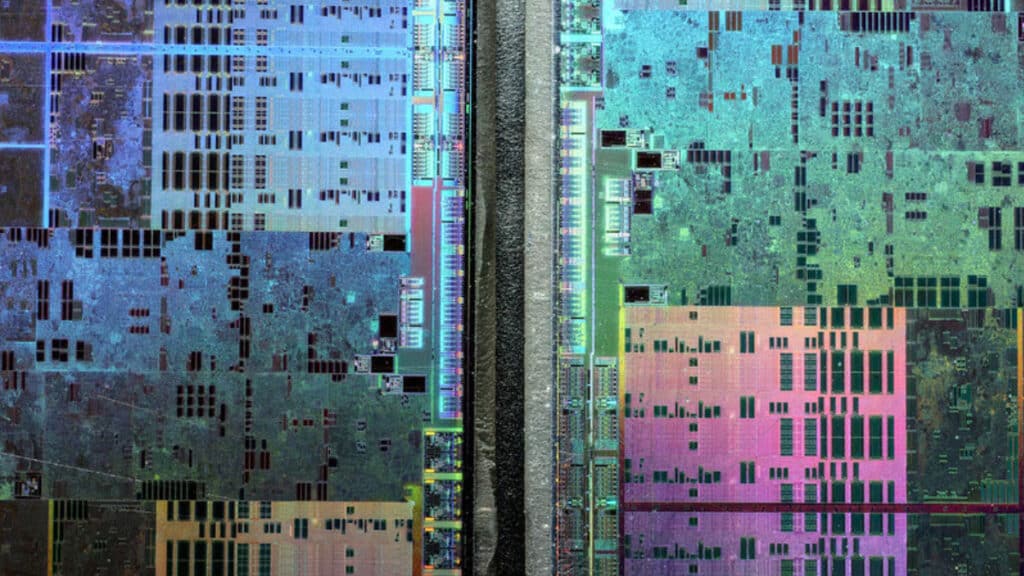

Chatbots like ChatGPT are Large Language Models (LLMs), a type of artificial intelligence known as “generative AI.” Generative AI refers to algorithms that, after training on massive amounts of input data, can create new outputs, be they text, audio, images or video. The same technology fuels applications like Midjourney and DALL-E 2 that produce synthetic digital imagery, including “deepfakes.”

Powered by the language model Generative Pretrained Transformer 3 (GPT-3), ChatGPT is one of today’s largest and most powerful LLMs. It was developed by San Francisco-based startup OpenAI—the brains behind DALL-E 2—with backing from Microsoft and other investors, and was trained on over 45 terabytes of text from multiple sources including Wikipedia, raw webpage data and books to produce human-like responses to natural language inputs.

LLMs like ChatGPT interact with users in a conversational manner, allowing the chatbot to answer follow-up questions, admit mistakes, and challenge premises and queries. Chatbots can write and improve code, summarize text, compose emails and engage in protracted colloquies with humans. The results can be eerie; in extended conversations in February 2023 with journalists, chatbots grew lovelorn and irascible and expressed dark fantasies of hacking computers and spreading misinformation.

The promise of these applications has spurred an “arms race” of investment into chatbots and other forms of generative AI. Microsoft recently announced a new, $10 billion investment in OpenAI, and Google announced plans to launch an AI-powered chatbot called Bard later this year.

The technology is advancing at a breakneck speed. As Axios put it, “The tech industry isn’t letting fears about unintended consequences slow the rush to deploy a new technology.” That approach is good for innovation, but it poses its own challenges. As generative AI advances, companies will face a number of legal and ethical risks, both when malicious actors leverage this technology to harm businesses and when businesses themselves implement chatbots or other forms of AI in their functions.

This is a quickly developing area, and new legal and business dangers—and opportunities—will arise as the technology advances and use cases emerge. Government, business and society can take the early learnings from the explosive popularity of generative AI to develop guardrails to protect against their worst behavior and use cases before this technology pervades all facets of commerce. To that end, businesses should be aware of the following top 10 risks and how to address them.

Risks

1. Contract Risks

Using chatbots or similar AI tools may implicate a range of contractual considerations.

Businesses should be wary of entering information from clients, customers or partners into chatbot prompts when that information is subject to contractual confidentiality limitations or other controls. This is because chatbots may not keep that information private; their terms of service typically grant the provider the right to use the data the chatbot ingests to develop and improve its services. If the bot provides opt-out features, users may want to utilize them before inputting contractually protected information into the prompts, but users should still proceed cautiously. In one exchange with a professor, ChatGPT itself warned that “[i]nformation provided to me [ChatGPT] during an interaction should be considered public, not private” and that the bot cannot “ensure the security or confidentiality of any information exchanged during these interactions, and the conversations may be stored and used for research or training purposes.”

Likewise, a business will need to curtail its use of chatbots or AI generally if a contract obligates the business to produce work or perform services on its own or through a specific employee, without the aid of AI. To the extent that a chatbot generates contract work product (unlike traditional information technology, which merely provides a platform for generating work product), a chatbot service could be a subcontractor, potentially subject to pre-approval by the ultimate customer.

In both circumstances, the key is to recognize that the relationship between a user and a chatbot is not akin to the relationship between a user and a word processing program or a similar static tool. Chatbots and other generative AI software are learning machines that by default use information entered into them for their own purposes and that produce their own output. (Beware: all of the inputs could potentially be discoverable in litigation.) For these reasons, they pose risks to businesses’ contractual obligations, and companies should use these tools circumspectly.
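One way a business might operationalize this caution is to screen prompts before they leave the company. The following is a minimal sketch only: the pattern names, the regular expressions and the `screen_prompt` helper are all hypothetical illustrations, not features of any chatbot service, and a real screen would need to reflect the definitions of protected information in each contract.

```python
import re

# Hypothetical patterns, for illustration only. A real deployment would be
# tuned to what each contract actually defines as protected information.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "confidential_marker": re.compile(
        r"\b(confidential|privileged|proprietary)\b", re.IGNORECASE
    ),
}


def screen_prompt(text):
    """Return the sorted names of every pattern found in the prompt text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


if __name__ == "__main__":
    draft = "Summarize this CONFIDENTIAL memo for jane.doe@example.com"
    hits = screen_prompt(draft)
    if hits:
        print("Hold for human review before sending:", hits)
    else:
        print("No flagged patterns found.")
```

A screen like this catches only obvious markers; it does not replace review of the underlying contract terms or human judgment about what may be shared.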

2. Cybersecurity Risks

Chatbots pose cybersecurity risks to businesses along two main axes. First, malicious users without sophisticated programming skills can use chatbots to create malware for cyberattacks. Second, because chatbots can convincingly produce fluent, conversational English, they can generate human-like conversations for social engineering, phishing and malicious advertising schemes, including on behalf of bad actors with poor English-language skills. Chatbots like ChatGPT typically disallow malicious uses through their usage policies and implement system rules to prohibit bots from responding to queries that ask for the creation of malicious code per se; however, cybersecurity researchers have found work-arounds that threat actors on the dark web and special-access sources have already exploited. In response, companies should redouble efforts to bolster their cybersecurity and train employees to be on the lookout for phishing and social engineering scams.

3. Data Privacy Risks

Chatbots may collect personal information as a matter of course. For example, ChatGPT’s Privacy Policy states that it collects a user’s IP address, browser type and settings; data on the user’s interactions with the site; and the user’s browsing activities over time and across websites, all of which it may share “with third parties.” If a user does not provide such personal information, the chatbot’s services may be inoperable. Currently, the leading chatbots do not appear to provide the option for users to delete the personal information gathered by their AI models.

Because laws in the United States and Europe impose restrictions on the sharing of certain personal information about, or obtained from, data subjects—some of which chatbots may collect automatically, and some that a user may input into the chatbot’s prompt—businesses using chatbots or integrating them into their products should proceed cautiously. Data privacy regulators could scrutinize these systems, assessing whether their user-consent options and opt-out controls stand up to legal scrutiny. For example, the California Privacy Rights Act requires California companies of a certain size to provide notice to individuals and the ability to opt out of the collection of some personal information.

Some data privacy regimes impose regulations on entities that merely collect information, like the AI systems that ingested billions of Internet posts to create their models. In California, for example, unless an entity is registered as a data broker, it is supposed to provide a “notice at collection” to any California resident about whom it is collecting data.

To mitigate data privacy risks, companies utilizing chatbots and generative AI tools should review their privacy policies and disclosures, comply with applicable data protection laws with regard to processing personal information, and provide opt-out and deletion options.

4. Deceptive Trade Practice Risks

If an employee outsources work to a chatbot or AI software when a consumer believes he or she is dealing with a human, or if an AI-generated product is marketed as human made, these misrepresentations may run afoul of federal and state laws prohibiting unfair and deceptive trade practices. The Federal Trade Commission (FTC) has released guidance stating that Section 5 of the FTC Act, which prohibits “unfair and deceptive” practices, gives it jurisdiction over the use of data and algorithms to make decisions about consumers and over chatbots that impersonate humans.

For example, in 2016, the FTC alleged that an adultery-oriented dating website deceived consumers by using fake “engager profiles” to trick customers to sign up, and in 2019, the FTC alleged that a defendant sold phony followers, subscribers and likes to customers to boost social media profiles. In sum, “[i]f a company’s use of doppelgängers—whether a fake dating profile, phony follower, deepfakes, or an AI chatbot—misleads consumers, that company could face an FTC enforcement action,” or enforcement by state consumer protection authorities.

To address this issue, the FTC emphasizes transparency. “[W]hen using AI tools to interact with customers (think chatbots), be careful not to mislead consumers about the nature of the interaction,” the FTC warns. Companies should also be transparent when collecting sensitive data to feed into an algorithm to power an AI tool, explain how an AI’s decisions impact a consumer and ensure that decisions are fair.

Likewise, the White House’s October 2022 Blueprint for an AI Bill of Rights suggests developers of AI tools provide “clear descriptions of the overall system functioning and the role automation plays, notice that such systems are in use, the individual or organization responsible for the system, and explanations of outcomes that are clear, timely, and accessible.”

5. Discrimination Risks

Issues related to discrimination can arise in different ways when businesses use AI systems. First, bias can result because of the biased nature of the data on which AI tools are trained. Because AI models are built by humans and learn by devouring data created by humans, human bias can be baked into an AI’s design, development, implementation and use. For example, in 2018, Amazon reportedly scrapped an AI-based recruitment program after the company found that the algorithm was biased against women. The model was programmed to vet candidates by observing patterns in resumes submitted to the company over a 10-year period, but because the majority of the candidates in the training set had been men, the AI taught itself that male candidates were preferred over female candidates.

ChatGPT, like other LLMs, can learn to express the biases of the data used to train them. As OpenAI acknowledges, ChatGPT “may occasionally produce harmful instructions or biased content.”

Second, users can purposefully manipulate AI systems and chatbots to produce unflattering or prejudiced outputs. For example, despite built-in features to inhibit such responses, one user got ChatGPT to write code stating that only White or Asian men make good scientists; OpenAI has reportedly updated the bot to respond, “It is not appropriate to use a person’s race or gender as a determinant of whether they would be a good scientist.” In another recent example, at a human’s direction, the chatbot adopted a “devil-may-care alter ego” that opined that Hitler was “complex and multifaceted” and “a product of his time.”

Federal regulators and the White House have repeatedly emphasized the importance of using AI responsibly and in a nondiscriminatory manner. For example, the White House’s Blueprint for an AI Bill of Rights declares that users “should not face discrimination by algorithms and systems should be used and designed in an equitable way.” Algorithmic discrimination, which has long existed independent of chatbots, refers to when automated systems “contribute to unjustified different treatment or impacts disfavoring people” based on various protected characteristics like race, sex, and religion.

“Designers, developers, and deployers of automated systems should take proactive and continuous measures to protect individuals and communities from algorithmic discrimination and to use and design systems in an equitable way,” the White House advises. This protection should include “proactive equity assessments as part of the system design,” the use of representative data, “predeployment and ongoing disparity testing and mitigation, and clear organizational oversight,” among other actions.

Similarly, in April 2021, the FTC noted that even “neutral” AI technology can “produce troubling outcomes—including discrimination by race or other legally protected classes.” The FTC recommends that companies’ use of AI tools be transparent, explainable, and fair and empirically sound so as not to mislead consumers about the nature of their interactions with the company.

Finally, in January 2023, the National Institute of Standards and Technology (NIST) issued a Risk Management Framework for using AI in a trustworthy manner. The Risk Management Framework provides voluntary guidance to users of AI and sets forth principles for managing risks related to fairness and bias, as well as other principles of responsible AI such as validity and reliability, safety, security and resiliency, explainability and interpretability, and privacy.

Bias may arise in AI systems even absent prejudicial or discriminatory intent by their human creators. As urged by emerging US government guidance, companies using such tools should carefully consider the potential for prejudicial or discriminatory impact, be forthright about how they are using chatbots and other generative AI tools, conduct regular testing to judge disparities, and impose a process for humans to review the outputs to ensure compliance with anti-discrimination laws and to safeguard against reputational harm.
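To make "regular testing to judge disparities" concrete, the sketch below compares selection rates across groups in a set of AI-assisted decisions. It is an illustration under stated assumptions, not a legal test: the function names, the record format and the `threshold` value of 0.8 are hypothetical choices made for this example, and any real testing program should be designed with counsel.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the share of favorable outcomes per group.

    `records` is an iterable of (group, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparity_report(records, threshold=0.8):
    """Compare each group's rate to the highest group rate.

    `threshold` is an illustrative assumption, not a legal standard:
    groups whose ratio falls below it are flagged for human review.
    """
    rates = selection_rates(records)
    best = max(rates.values())
    report = {}
    for group, rate in rates.items():
        ratio = rate / best if best > 0 else 1.0
        report[group] = {"rate": rate, "ratio": ratio, "flag": ratio < threshold}
    return report


if __name__ == "__main__":
    # Made-up sample data: group A is selected 8 times in 10, group B 4 in 10.
    sample = [("A", True)] * 8 + [("A", False)] * 2
    sample += [("B", True)] * 4 + [("B", False)] * 6
    for group, row in disparity_report(sample).items():
        print(group, row)
```

A flag from a check like this is a prompt for the human review described above, not a conclusion that a system is or is not discriminatory.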

6. Disinformation Risks

Chatbots can help malicious actors create false, authoritative-sounding information at mass scale quickly and at little cost. Researchers showed recently that chatbots can compose news articles, essays and scripts that spread conspiracy theories, “smoothing out human errors like poor syntax and mistranslations and advancing beyond easily discoverable copy-paste jobs.”

False narratives coursing through the internet already regularly harm businesses. For example, in 2020, the QAnon-inspired theory spread online that the furniture seller Wayfair was connected with child sex trafficking because of the coincidental overlap of the names of some of its furniture pieces and those of missing children. As a result, social media users attempted to orchestrate a large short sale of Wayfair’s stock, posted the address and images of the company’s headquarters and the profiles of employees, and harassed the CEO.

Now, a single bad actor with access to an effective chatbot could generate a flood of human-looking posts like those that targeted Wayfair and loose them on the internet, potentially harming the reputation and valuation of innocent companies. Add to these false narratives deepfake imagery of, say, the CEO of the targeted business doing something untoward, and the dangers will accelerate.

What is more, malicious actors can teach AI models bogus information by feeding lies into their models, which the models will then spread.

Managing disinformation risk is complex. In short, businesses should plan for disinformation dangers like they plan for cyberattacks or crisis events, proactively communicate their messages, monitor how their brands are perceived online, and be prepared to respond in the event of an incident.

7. Ethical Risks

Businesses in professions regulated by ethics organizations, such as law, medicine and accounting, should ensure that their use of AI comports with their professional obligations.

For example, in the legal services industry, “legal representation” is explicitly defined in several jurisdictions as a service rendered by a person. Because AI chatbots are not “persons” admitted to the bar, they cannot practice law before a court. Accordingly, the use of AI in the legal industry could elicit charges of the unauthorized practice of law. Case in point: In January 2023, Joshua Browder, the CEO of the AI company DoNotPay, attempted to deploy an AI chatbot to argue in a physical courtroom. But after “state bar prosecutors” purportedly threatened legal action and six months’ jail time for the unauthorized practice of law, Browder canceled the appearance. To avoid potential violations of ethical obligations, companies should ensure any use of AI tools comports with ethical and applicable professional codes.

8. Government Contract Risks

The US government is the largest purchaser of supplies and services in the world. US government contracts are typically awarded pursuant to formal competitive procedures, and the resulting contracts generally incorporate extensive standardized contract terms and compliance requirements, which frequently deviate from practices in commercial contracting. These procedural rules and contract requirements will govern how private companies might use AI to prepare bids and proposals seeking government contracts and to perform those contracts that are awarded.

When preparing a bid or proposal in pursuit of a government contract, companies should be transparent about any intended or potential use of AI to avoid the risk of misleading the government about whether the work product will be generated, in whole or in part, by a third party’s AI tool. If two competing bidders use the same AI tool to develop their proposals, there is a chance that the proposals will appear similar. Indeed, OpenAI’s Terms of Use warn that “[d]ue to the nature of machine learning, Output [from ChatGPT] may not be unique across users and [the chatbot] may generate the same or similar output for OpenAI or a third party.” Such similarity could create an appearance of sharing of contractor bid or proposal information, which is prohibited by the Procurement Integrity Act. If competitive proposal information is entered into a third-party AI tool, that information might actually be used by the tool through a machine learning process to generate another offeror’s proposal, which could actually constitute a prohibited sharing of contractor bid or proposal information.

For awarded government contracts, a contractor should review the contract before using AI to create deliverables to ensure that the contract does not prohibit the use of such tools to generate work product.

Thus, government contractors should proceed cautiously and in consultation with counsel before relying on chatbots or generative AI to pursue or perform government contracts.

9. Intellectual Property Risks

Intellectual property (IP) risks associated with using AI can arise in several ways.

First, because AI systems have been trained on enormous amounts of data, such training data will likely include third-party IP, such as patents, trademarks, or copyrights, for which use authorization has not been obtained in advance. Hence, outputs from the AI systems may infringe others’ IP rights. This phenomenon has already led to litigation.

In November 2022, in Doe v. GitHub, pseudonymous software engineers filed a putative class action lawsuit against GitHub, Microsoft and OpenAI entities alleging that the defendants trained two generative AI tools—GitHub Copilot and OpenAI Codex—on copied copyrighted material and licensed code. Plaintiffs claim that these actions violate open source licenses and infringe IP rights. This litigation is considered the first putative class action case challenging the training and output of AI systems.

In January 2023, in Andersen v. Stability AI, three artists filed a putative class action lawsuit against AI companies Stability AI, Midjourney and DeviantArt for copyright infringement over the unauthorized use of copyrighted images to train AI tools. The complaint describes AI image generators as “21st-century collage tools” that have used plaintiffs’ artworks without consent or compensation to build the training sets that inform AI algorithms.

In February 2023, Getty Images filed a lawsuit against Stability AI, accusing it of infringing its copyrights by misusing millions of Getty photos to train its AI art-generation tool.

Second, disputes may arise over who owns the IP generated by an AI system, particularly if multiple parties contribute to its development. For example, OpenAI’s Terms of Use assign the “right, title and interest” in the output of the LLM to the user who provided the prompts, so long as the user abided by OpenAI’s terms and the law. OpenAI reserves the right to use both the user’s input and the AI-generated output “to provide and maintain the Services, comply with applicable law, and enforce our policies.”

Third, there is the issue of whether IP generated by AI is even protected because, in some instances, there is arguably no human “author” or “inventor.” Litigants are already contesting the applicability of existing IP laws to these new technologies. For example, in June 2022, Stephen Thaler, a software engineer and the CEO of Imagination Engines, Inc., filed a lawsuit asking the courts to overturn the US Copyright Office’s decision to deny a copyright for artwork whose author was listed as “Creativity Machine,” an AI software Thaler owns. (The US Copyright Office has stated that works autonomously generated by AI technology do not receive copyright protection because the Copyright Act grants protectable copyrights only to works created by a human author with a minimal degree of creativity.) In late February 2023, the US Copyright Office ruled that images used in a book that were created by the image-generator Midjourney in response to a human’s text prompts were not copyrightable because they are “not the product of human authorship.”

As the law surrounding the use of AI develops, companies seeking to use LLMs and generative AI tools to develop their products should document the extent of such use and work with IP counsel to ensure adequate IP protections for their products. For example, the Digital Millennium Copyright Act requires social media companies to remove posts that infringe on IP, and generative AI systems may have avenues through which rights holders can alert the platforms to infringing uses. OpenAI, for instance, provides an email address where rights holders can send copyright complaints, and OpenAI says “it may delete or disable content alleged to be infringing and may terminate accounts of repeat infringers.”

10. Validation Risks

As impressive as chatbots are, they can make false, although authoritative-sounding, statements, often referred to as “hallucinations.” LLMs are not sentient and do not “know” the facts. Rather, they produce only the most likely response to a prompt based on the data on which they were trained. OpenAI itself acknowledges that ChatGPT may “occasionally produce incorrect answers” and cautions that ChatGPT has “limited knowledge of world and events after 2021.” Users have flagged and cataloged responses in which ChatGPT flubbed answers to mathematical problems, historical queries and logic puzzles.

Companies seeking to use chatbots should not simply accept the AI-generated information as true and should take measures to validate the responses before incorporating them into any work product, action or business decision.

Conclusion

With the pell-mell development of chatbots and generative AI, businesses will encounter both the potential for substantial benefits and the evolving risks associated with the use of these technologies. While specific facts and circumstances will determine particular counsel, businesses should consider these top-line suggestions:

- Be circumspect in the adoption of chatbots and generative AI, especially when pursuing government contracts or generating work required by government or commercial contracts;

- Consider adopting policies governing how such technologies will be deployed in business products and utilized by employees;

- Recognize that chatbots can often err, and instruct employees not to rely on them uncritically;

- Carefully monitor the submission of business, client or customer data into chatbots and similar AI tools to ensure such use comports with contractual obligations and data privacy rules;

- If using generative AI tools, review privacy policies and disclosures, require consent from users before allowing them to enter personal information into prompts, and provide opt-out and deletion options;

- If using AI tools, be transparent about it with customers, employees and clients;

- If using AI software or chatbots provided by a third party, seek contractual indemnification from the third party for harms that may arise from that tool’s use;

- Bolster cybersecurity and social engineering defenses against AI-enabled threats;

- Review AI outputs for prejudicial or discriminatory impacts;

- Develop plans to counter AI-powered disinformation;

- Ensure that AI use comports with ethical and applicable professional standards; and

- Copyright original works and patent critical technologies to strengthen protection against unauthorized sourcing by AI models and, if deploying AI tools, work with IP counsel to ensure outputs are fair use.

- - -

We thank Partners Barry Hurewitz and Kirk Nahra, Counsel Rebecca Lee, and Senior Associate Ali Jessani for their contributions to this article.
