It’s Too Soon To Tell If the TAKE IT DOWN Act Is Working

Alejandro Cuevas / May 13, 2026

Alejandro Cuevas is a postdoctoral fellow at Princeton’s Center for Information Technology Policy.

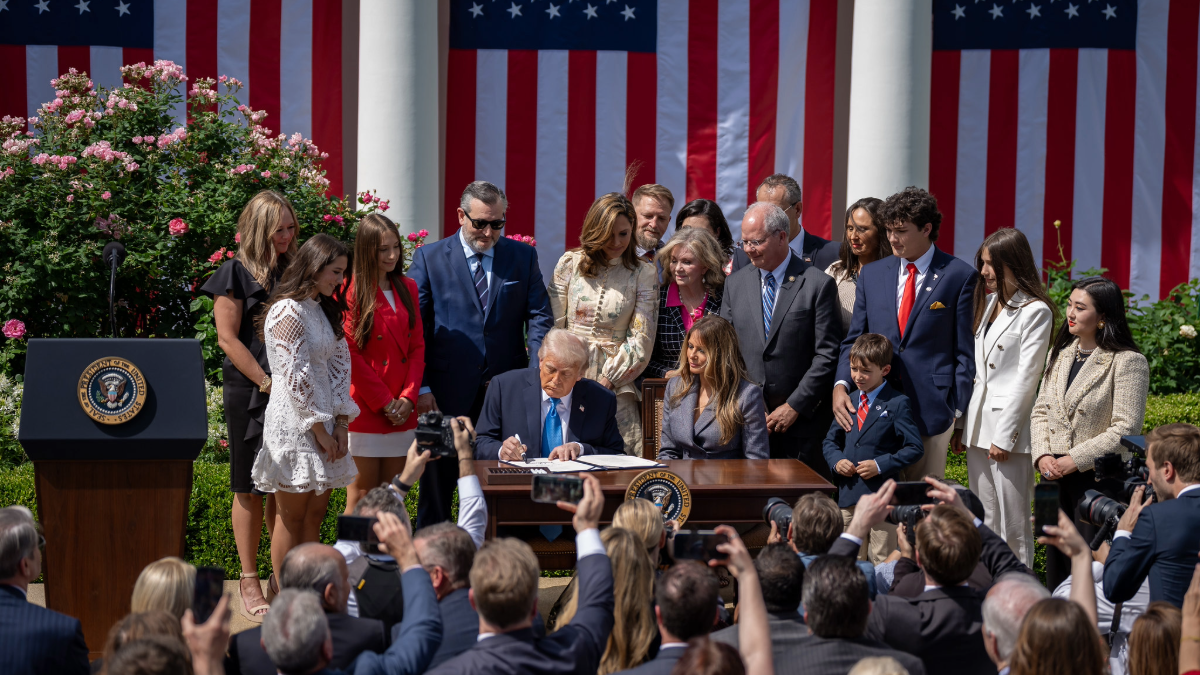

President Donald Trump and First Lady Melania Trump participate in a bill signing ceremony with lawmakers and advocates for the Take it Down Act, Monday, May 19, 2025, in the White House Rose Garden.(Official White House photo by Carlos Fyfe)

A year ago, on May 19, 2025, United States President Donald Trump signed the TAKE IT DOWN Act into law. The landmark bill made it a federal crime to publish non-consensual intimate images, whether authentic or AI-generated. Now, days before the one-year anniversary of its passage, Federal Trade Commission (FTC) Chairman Andrew Ferguson is “reminding businesses of their obligation to comply” and of the “penalties for non-compliance.”

But has the new law changed the risk calculation for creating and distributing non-consensual intimate imagery (NCII)? Our research paints a bleak picture and suggests that policing offenders will be difficult. So far, the evidence indicates that, despite federal criminalization, both the supply of and demand for AI-generated NCII continued to grow in 2025. Whether platform accountability proves more effective than criminal deterrence is now being put to the test.

A many-headed hydra

Just after the House of Representatives passed the TAKE IT DOWN Act, and only two weeks before Trump signed it, the infamous MrDeepfakes website announced it was shutting down. The demise of MrDeepfakes, which 404 Media called the “go-to site for nonconsensual deepfake porn,” was hailed by experts who regarded the site as a scourge. The note left on the website claimed that critical infrastructure providers had withdrawn their services. Media reports remarked on the timing of these events. At first glance, it seemed that the TAKE IT DOWN Act, which criminalized sexually explicit non-consensual imagery (colloquially referred to as “deepfake pornography”), could be off to a great start. In other parts of the Internet, however, pornographic deepfakes were thriving.

Creating a high-fidelity pornographic video or image of someone’s likeness is cheaper and easier than ever. Major AI products, such as xAI’s Grok, have been found to comply with lewd user requests. Beyond mainstream AI products, an emerging ecosystem of websites now allows people to upload a reference image alongside a text prompt describing the scene they want to create. Advertisements for these “nudification” websites invite viewers to undress their coworkers, classmates, neighbors, and even their friends’ wives. These tools return a result in just a few seconds, many for only a few cents or even for free. Technically savvy individuals seeking fewer restrictions and more customization can obtain, install, and run AI models on their own computers, offline and out of reach of legal and technical interventions.

Creation is only the first step. In many online communities, users distribute their prurient creations and share tips and tutorials on improving their “creative” workflows and on bypassing, often quite easily, the safety measures implemented by AI models and websites. The now-defunct MrDeepfakes, established in 2018, was among the most notorious of these communities given its relatively long existence. But many other online communities, now larger than MrDeepfakes was, have also capitalized on the skyrocketing popularity of synthetic lookalike pornography.

In a preprint study posted to arXiv earlier this year, we identified three online forums where users share adult content: 4chan, Website A, and Website B. We use the labels “Website A” and “Website B” to avoid sending new visitors to these two communities, which, unlike 4chan, have not been studied before. Websites A and B boast millions of registered users and likely as many monthly visitors; by contrast, MrDeepfakes had fewer than 50,000 registered users. Both websites host a variety of sexually explicit content but have subforums dedicated to specific niches, including AI-enabled pornography of real people. The number of new posts and contributors in each of these subforums can serve as a proxy for the supply of deepfake pornography. On 4chan, we identified a subforum where users come to request nudification services, which makes activity there a proxy for demand.
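To make the proxy concrete, here is a minimal Python sketch of how one might tally monthly activity in a single subforum. It assumes each post is available as a (date, author) pair. The function name, data layout, and toy data are hypothetical illustrations, not the study’s actual pipeline.

```python
from collections import defaultdict
from datetime import date


def monthly_activity(posts):
    """Tally posts and distinct contributors per calendar month.

    `posts` is an iterable of (date, author_id) pairs for ONE subforum.
    Returns {(year, month): (post_count, distinct_contributor_count)}, sorted by month.
    """
    post_counts = defaultdict(int)
    contributors = defaultdict(set)
    for day, author in posts:
        month = (day.year, day.month)
        post_counts[month] += 1
        contributors[month].add(author)
    return {m: (post_counts[m], len(contributors[m])) for m in sorted(post_counts)}


# Hypothetical toy data for illustration only; not drawn from the study.
toy_posts = [
    (date(2025, 4, 3), "user1"),
    (date(2025, 4, 20), "user1"),
    (date(2025, 6, 2), "user2"),
    (date(2025, 6, 9), "user3"),
    (date(2025, 6, 30), "user1"),
]

for month, (n_posts, n_contributors) in monthly_activity(toy_posts).items():
    print(month, n_posts, n_contributors)
```

One simple way to look for a shift in supply or demand is to compare these monthly series before and after May 19, 2025. The study itself may rely on a different or more careful method.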

Notably, both supply and demand across these subforums increased sharply after the TAKE IT DOWN Act’s passage. This was surprising given the criminal penalties that took effect last May. One possibility is that the law and the surrounding publicity drew attention to deepfake pornography, leading new users to discover these communities. Alternatively, newcomers may have migrated from platforms that shut down (e.g., MrDeepfakes) or that tightened their policies, such as CivitAI, a platform for creating AI images and videos that was notorious for hosting models that could be used to generate deepfakes. That these users could find a home elsewhere demonstrates the resilience of the ecosystem. After all, MrDeepfakes itself sprang up a week after the subreddit /r/deepfakes was removed from Reddit.

Enforcement set to begin

Under the TAKE IT DOWN Act, each of these forums would be considered a “covered platform,” since each is an online service, application, or website whose primary feature is user-generated content. This means that, by May 19, 2026, these platforms must have a notice-and-removal process through which victims can request that their content be taken down. Platforms must remove the content within 48 hours of receiving a “valid” request: a written notice that includes a physical or digital signature of the individual (or a representative), information sufficient to locate the media to be taken down, a good-faith statement that the depiction was not consensual, and the individual’s contact information. Last year, 4chan added instructions for individuals seeking to take down content under the Act. Despite this, we did not observe any substantial deletions of content, which suggests that few removal requests have been sent. Websites A and B have yet to publish a protocol for handling requests under the Act.
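The notice-and-removal requirements amount to a short checklist combined with a clock. The Python below is a hypothetical sketch. It does not describe any platform’s actual system, and it is not a complete reading of the statute. It treats a notice as valid only when all four elements listed above are non-blank, and it computes the 48-hour deadline from the time of receipt. The class, field, and function names, as well as the sample request, are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Removal window: 48 hours after a valid request is received.
REMOVAL_WINDOW = timedelta(hours=48)


@dataclass
class RemovalRequest:
    """Hypothetical takedown notice; field names are illustrative, not statutory terms."""
    signature: str             # physical or digital signature of the individual or a representative
    location_info: str         # information sufficient to locate the media, e.g. a URL
    good_faith_statement: str  # statement that the depiction was not consensual
    contact_info: str          # how the platform can reach the requester
    received_at: datetime      # when the platform received the notice


def is_valid(req: RemovalRequest) -> bool:
    """Simplified check: all four required elements must be present (non-blank)."""
    elements = (req.signature, req.location_info, req.good_faith_statement, req.contact_info)
    return all(e.strip() for e in elements)


def removal_deadline(req: RemovalRequest) -> Optional[datetime]:
    """Latest time to remove the content, or None if the notice is incomplete."""
    if not is_valid(req):
        return None
    return req.received_at + REMOVAL_WINDOW


# Hypothetical sample notice.
request = RemovalRequest(
    signature="/s/ Jane Doe",
    location_info="https://forum.example/post/123",
    good_faith_statement="I did not consent to this depiction.",
    contact_info="jane@example.com",
    received_at=datetime(2026, 5, 20, 9, 0, tzinfo=timezone.utc),
)
print(removal_deadline(request))  # 2026-05-22 09:00:00+00:00
```

A real process would require much more, such as verifying the requester and actually locating and removing the reported media. The sketch only shows how the validity check and the deadline fit together.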

In parallel with US efforts to curb nonconsensual deepfakes, the UK began enforcing the Online Safety Act (OSA) in March 2025. The OSA covers a broader range of online harms, sexual deepfakes among them. Covered platforms must assess the risks their services pose to users and implement proportionate measures to prevent users from encountering illegal content. In April, Ofcom determined that 4chan was a covered platform and demanded a copy of its risk assessment. Later that year, 4chan was fined, and an investigation was launched into Website A.

The TAKE IT DOWN Act is not without its critics. Organizations such as the Electronic Frontier Foundation (EFF) have warned that malicious actors could weaponize takedown requests to suppress speech. So far, this does not appear to have happened, likely because the Act does not yet seem to have meaningfully inhibited the dissemination of deepfake pornography. As our paper finds, the ecosystem only seemed to expand through 2025, despite users’ apparent awareness of the criminal nature of their acts.

Perhaps deterrence will increase as enforcement ramps up. The first criminal conviction under the TAKE IT DOWN Act came last month. Victims of nonconsensual deepfakes, of all ages, suffer tremendous emotional, social, and physical harm. Before the TAKE IT DOWN Act, the US had only a patchwork of state laws. Criminalizing the sharing of non-consensual sexually explicit imagery is an important regulatory achievement. The question is whether adequate enforcement will follow.
