How AI Hype Masks the Exploitation of African Workers

Marché Arends, Kathryn Cleary / Mar 25, 2026

This post is part of a series on Hype Studies that will appear on Tech Policy Press in 2026. More from the series is here.

Categorize by Pauline Wee & DAIR / Better Images of AI / Creative Commons 4.0

AI hype is the next chapter in the colonial playbook. It reframes the exploitation of African digital workers as “innovation” and is a tool of power wielded by profiteers of colonial extractivism in the digital age. It functions as a carefully crafted cover story by disguising appropriation in the language of “progress.”

We know this is true because, as investigative journalists, we spent eight months chasing the wrong story—more on this later.

What is AI hype?

We frame AI hype as the promotional messaging and institutional ideology that casts artificial intelligence—particularly so-called “superintelligence”—as “inevitable” and destined to bring historic “transformation.”

In practice, it looks like xAI founder Elon Musk telling staff in 2025 that Artificial General Intelligence (AGI)—systems that supposedly match or surpass human cognitive abilities—could be achieved as soon as 2026.

It looks like OpenAI co-founder and CEO Sam Altman blogging in 2024 about how AI will magically improve everyone’s lives: “With these new abilities, we can have shared prosperity to a degree that seems unimaginable today,” he writes. “In the future, everyone’s lives can be better than anyone’s life is now.”

It also looks like you.com founder Richard Socher proclaiming in 2023 that “artificial intelligence will disrupt every industry that can collect any kind of data and has repetitive processes.”

Of course, this is one type of hype. We acknowledge that hype itself can be mobilized as a positive force in society. As members of the Fourth Estate, our focus is on accountability. This means we perceive hype as a tool or mechanism of power and control. And we are interested in how it is used by powerful actors to simultaneously influence public belief and mask exploitation.

Is precarious gig work the new face of colonial extraction?

During our year-long investigation, supported by the Pulitzer Center and published by Africa Uncensored, into the opaque and confusing world of micro-tasking, we spoke to African digital workers—from Nigeria, to South Africa, to Kenya—who train Large Language Models (LLMs) like ChatGPT.

Micro-tasking is similar to outsourcing but specific to the AI economy—hundreds of thousands of digital workers (also known as “gig” workers), mostly from Majority World countries, are tasked with refining the answers of LLMs and guiding them toward more sophisticated behavior. Their job is to correct errors, shape responses and, at least seemingly, “teach” the models how to perform.

Here we need to emphasize: LLMs don’t actually ‘think’, but depend on carefully curated training data that requires human insight and oversight at every step of the development process.

Each person we spoke with had a unique story, but a single, rather eerie thread ran through them all: extractive practices underpin LLM training. Wages are held below subsistence levels; AI tutors, annotators, and moderators are treated as disposable; and African expertise is appropriated without recognition. This echoes colonial economies of dispossession.

This phenomenon has been described by others in the field. There are many excellent examples of AI accountability journalism, but Karen Hao’s series AI Colonialism, published by MIT Technology Review in 2022, greatly influenced our reporting.

“The AI industry does not seek to capture land as the conquistadors of the Caribbean and Latin America did, but the same desire for profit drives it to expand its reach,” writes Hao in her introduction to the series. “The more users a company can acquire for its products, the more subjects it can have for its algorithms, and the more resources—data—it can harvest from their activities, their movements, and even their bodies.”

The initial hypothesis for our investigation centered on this sought-after data that Hao mentions. We posited that the recruitment process undertaken by African digital workers to land gigs on micro-tasking platforms was actually a front for stealing data to train LLMs for free. We disproved this hypothesis eight months into the investigation after learning that the application tests for AI tutors are standardized.

So, we pivoted, realizing we needed to build on the work of Hao and many other incredibly smart people, rather than parrot it. That meant going one step further than drawing parallels between the colonialism shaped by imperialists and the labor pipelines scaffolding AI development. It meant asking: Which forces sustain and perpetuate these oppressive hierarchies?

We shifted the focus from the hoarding of subjects for algorithms to the hoarding of digital workers, many of whom, according to our reporting, sat for months at a time without any tasks to complete even though they had been onboarded. (It’s important to note here that, generally, digital workers are not paid hourly but rather per accurately completed task. No tasks means no chance at earning.)

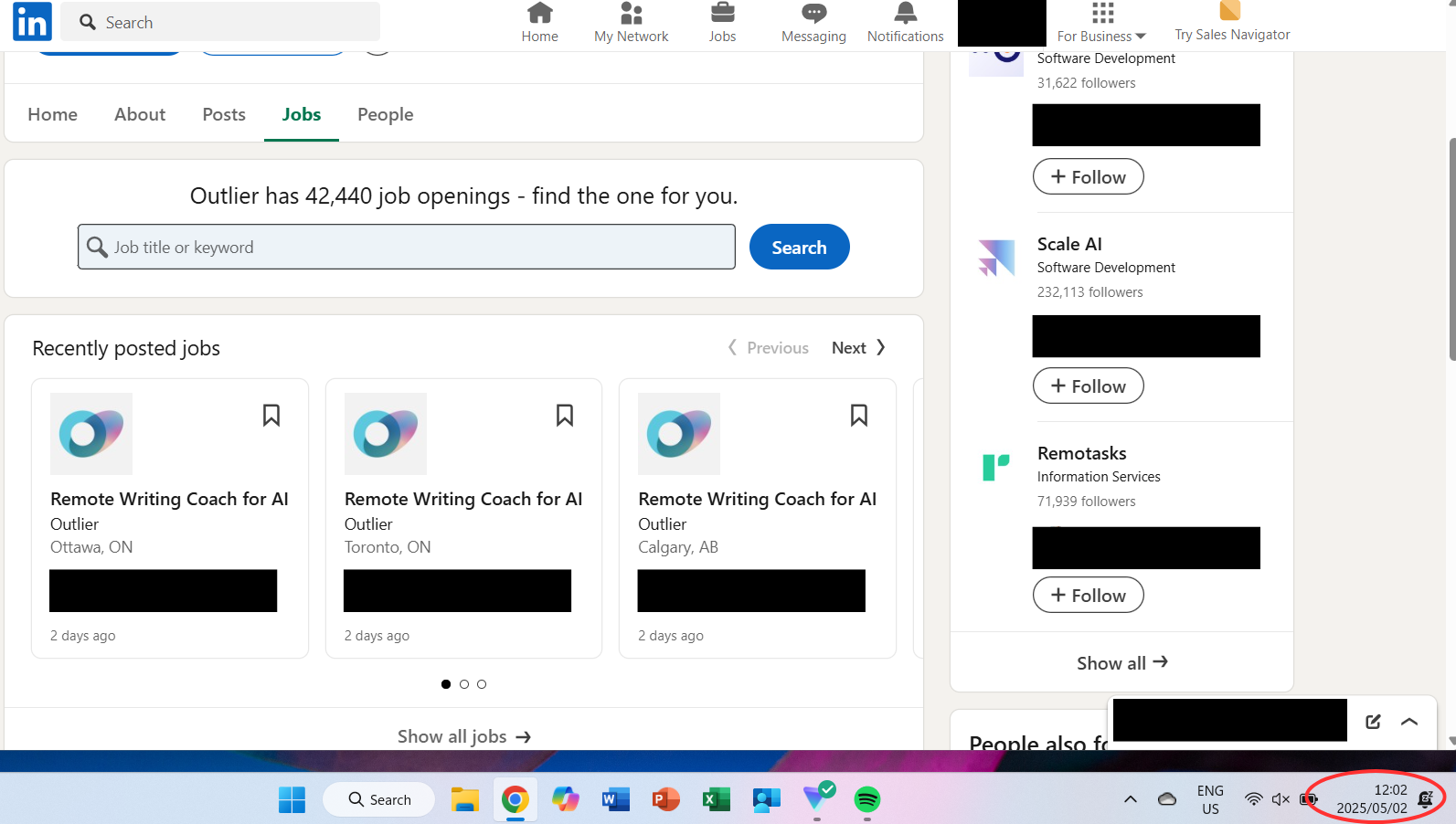

Despite the dearth in tasks, we watched as micro-tasking companies across the board staged recruitment drive after recruitment drive. We saw a particularly severe example of this on 2 May, 2025, when Outlier—a subsidiary company of Scale AI and a micro-tasking behemoth—had over 42,000 jobs advertised on LinkedIn.

Screenshot provided by the authors.

What does mass recruitment of gig workers have to do with AI hype?

For a year, we studied how micro-tasking companies use mass recruitment to win training contracts with Big Tech firms like OpenAI. One such company is Mindrift, a subsidiary of Toloka, which used to be owned by Yandex. The strategy was tricky to figure out at first, but once we did, we realized it was a standard copy-paste approach: hire en masse, despite knowing there is not enough work, to create the illusion of scale. Why? Because scale is a signal to investors to keep pouring huge sums of money into AI development.

In our investigation we describe this practice as “labor hedging,” or a form of corporate bench-warming. In other words: signaling abundance to investors, without guaranteeing work, in order to drive profits.

Mindrift never uses the term “labor hedging” but makes no secret of the strategy being central to its business model. A Mindrift blog post from February 2025 actually breaks it down pretty well, saying: “This ensures that if, for example, Company A reaches out asking for 50 cybersecurity experts, we’ve already found, vetted, and prepared the right candidates for them.”

This is speculative capitalism in action. In this system, value isn’t based on what a company delivers today, but on the promise of what it might deliver tomorrow. Idle workers become proof of “capacity”—a kind of collateral that reassures investors the company can grow at a moment’s notice. That illusion feeds hype, and hype inflates stock market value, transforming precarious labor into investor confidence and keeping the AI industry awash with capital.

In the end, human labor is leveraged not for the work it produces, but for the spectacle of abundance in a gambling game of epic proportions—all in feverish pursuit of something that experts say might not even exist: “superintelligent” AI.

Is AI hype the new colonial frontier?

When we examined rhetoric about AI in the public sphere, we saw a clear pattern. Altman says that everyone’s lives will be better. Mindrift advertises thousands of gigs but refuses to promise work. And the dominant narrative about AI today says: ‘This is a super, fantastic, brilliant, very good thing even though we have little evidence to show you why.’

Again and again, those with power insist on repeating and reinforcing the narratives of “inevitability” and “transformation” that ultimately benefit an elite few, while masking the absence of strong evidence and the persistence of exploitation. It is less a vision of the future and more a promotional ideology designed to consolidate power.

This is a historical pattern. And what history teaches us is that patterns become trends, trends become systems, and systems endure because those who gain from them fight hardest to keep them intact. Today, the battlefield is social media, where tech CEOs, politicians, investors, and their institutional amplifiers fire off declarations of “inevitability” and “transformation” at will.

Declaring AI inevitable is how hype becomes colonial power. The rhetoric of inevitability, in particular, stages hype as a manifest destiny, stripping away the possibility of refusal or alternative futures. This is the colonial frontier of AI: a terrain built not only on land (think: data centers literally draining Indigenous communities of natural resources) but also on language, where enchanting words discipline workers into precarity, convert human labor into symbolic collateral for speculative value, and disguise extraction as “progress.”

A platform like X, for example, where hype is staged and circulated, becomes the battlefield of narratives on which this frontier is fought over. “Inevitability” becomes the weapon that makes exploitation appear natural, necessary, normal, and even aspirational.

As investigative journalists, we work from a conviction: awareness and understanding restore agency. Our reporting seeks to sharpen that awareness and deepen that understanding so the communities we serve can shape their own futures—rather than being led into one designed without them in mind.

The colonial project never ended—it just got better PR.
