Whistleblower Documents Prompt Questions about Twitter’s Approach to Election Integrity

Justin Hendrix / Aug 25, 2022. Co-published with Just Security.

What a difference two weeks can make.

Back on Thursday, August 11, Twitter announced, in a series of tweets, that it would begin enforcing its “Civic Integrity Policy” in the context of the approaching U.S. midterm elections. A blog post marking the announcement described newly redesigned labels on posts containing misinformation. The labels, called “prebunks,” are intended to “get ahead of misleading narratives on Twitter,” and they work in tandem with information “hubs” to share state-specific information about elections. “Twitter plays a critical role in empowering democratic conversations, facilitating meaningful political debate, and providing information on civic participation – not only in the US, but around the world,” the company said. “People deserve to trust the election conversations and content they encounter on Twitter.”

Then came the whistleblower.

On Tuesday, August 23, documents revealed by a whistleblower, former Twitter head of security Peiter "Mudge" Zatko, suggested that, contrary to its stated intent, Twitter chronically under-resources efforts to protect the discourse around elections on its platform, and that the problem is far worse abroad than it is in the United States. The documents were first reported by the Washington Post and CNN. The Senate Judiciary Committee issued a subpoena for Zatko to testify next month.

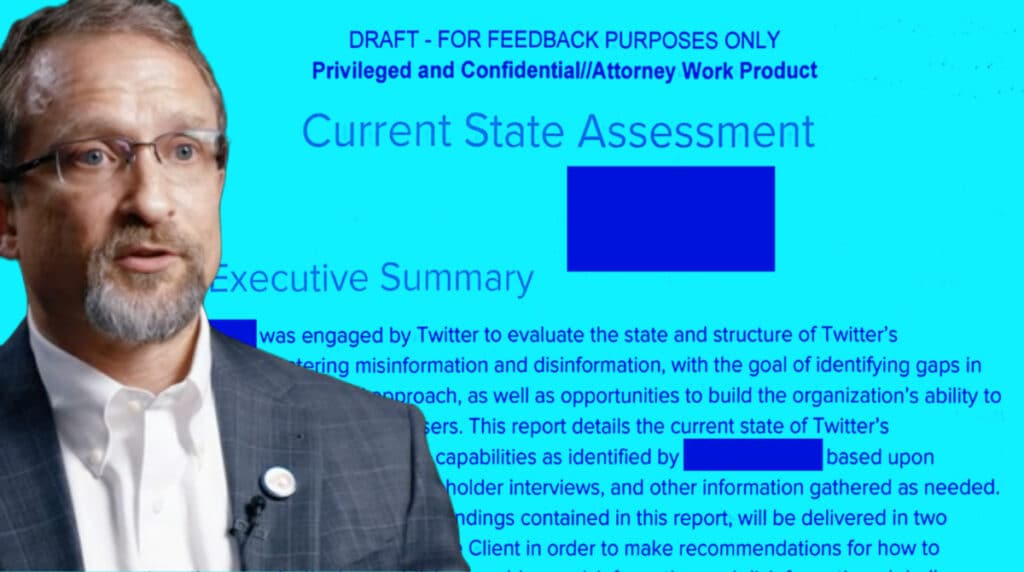

One of the documents Zatko revealed is a redacted version of a report titled “Current State Assessment,” prepared for Twitter by the Alethea Group, a consultancy. The report describes “the current state of Twitter’s misinformation and disinformation capabilities,” and identifies a variety of shortcomings in the company’s approach to these problems. With regard to elections, it describes three key areas of concern:

- Human resource issues, including understaffing, organizational challenges, and morale problems;

- A deficit of expertise, in particular on languages and cultural nuances necessary to mitigate threats outside the United States;

- Engineering challenges, such as substandard tools, the complexity of legacy systems and concerns about security.

While Twitter has likely taken some steps to address these issues since Zatko left the company in January 2022, the challenges surfaced in the whistleblower documents may be useful to congressional investigators and civil society groups seeking assurances that Twitter is prepared for the 2022 midterm election cycle in the United States, and to representatives of other governments abroad with similar, if not greater, concerns.

People Problems

The Alethea Group report, which is based on internal Twitter information and stakeholder interviews, indicates that civic and election integrity teams are understaffed and overworked. The report explains that this was a particularly acute problem in the period of the 2020 U.S. election, which saw the company rely on “100 full-time staff” across Twitter’s site integrity and safety teams, as well as “volunteers from other parts of the company” brought together under what the company dubbed the "Election Squad" framework. Employees who focused on the 2020 election described burnout, while senior managers reported an expectation that they be “always on” during the election cycle to respond to any issue that might escalate, particularly with regard to influential accounts.

The 2020 U.S. election was so resource-intensive that the company lacked the staff to properly support the launch of other products. For instance, the debut of Fleets, Twitter’s short-lived feature for ephemeral posts, was delayed until after the election because the company was so consumed by addressing election misinformation. There were also organizational and communications problems across departments. As another indicator of poor organizational design, the report says that the “organizational structure within Twitter that responds to disinformation and misinformation is siloed and not clearly defined,” due to its ad hoc evolution over time.

It’s Bad in the U.S., But Much Worse Abroad

While the 2020 U.S. election may have pushed Twitter’s safety and site integrity teams to near breaking point, the report plainly states that the company “lacks the organizational capacity in terms of staffing, functions, language, and cultural nuance to be able to operate in a global context.” The company has a bias toward the English language and English-speaking countries, so the problem is particularly acute in Africa, Latin America, and Asia. For instance, the Alethea Group found that Twitter was unable to provide “even a scaled-back version of the election support that was deployed for the US 2020 election” for the July 2022 Japanese election, which the company had identified as a priority. An internal document found that there were "no Japanese speakers on the Site Integrity team, only one [Trust & Safety] staff member located in Tokyo, and severely limited Japanese-language coverage among senior [Twitter Safety] Strategic Response staff."

The U.S.-centric approach means policies on mis- and disinformation “are often made in response to US-based events, such as the 2020 presidential election, QAnon content on the platform, manipulated media of House Speaker Nancy Pelosi, and more,” and thus “they often do not take into account different ongoing misinformation or disinformation campaigns in other parts of the world.” A patchwork of teams with different criteria decides which global elections are worth paying attention to, but even those designated “top tier” may not receive commensurate resources. The report documents, for instance, the frustration of Twitter’s team in Brazil, a country of over 210 million people, which unsuccessfully sought more attention during that country’s 2020 election cycle.

“Hacked together”

Just as human resources and organizational structures devoted to addressing election integrity issues were developed on an ad hoc basis, the legacy systems and tools the company relies on to address election mis- and disinformation also have significant shortcomings. At the time of the report’s completion, the situation was in part a result of organizational constraints: site integrity teams had to “request assistance from engineering teams in other parts of the company to do things like implement even small updates to existing tools or build new ones that could automate more of the process for both policy and investigative analysts.” Engineering teams were not required to work with the site integrity teams, so such requests were put on a waitlist. This meant the teams combatting election falsehoods relied on “manual and outdated tools, and individual know-how of its analysts who often must code their own solutions to complete their work.”

The process of labeling misinformation was itself complex, according to the internal documents, which detailed that "the process of applying labels is cumbersome," "requires the use of backend interfaces," and the "complex steps involved make scaled application of labels difficult to expand beyond a very small group of highly trained agents." While preparing the report, an Alethea Group representative “found that no less than five different tools were needed in order to label a single tweet.”

Questions for Lawmakers

Following the disclosure of the whistleblower documents, U.S. lawmakers were quick to issue letters raising security concerns and asking the Federal Trade Commission to consider whether the company may have violated its 2011 consent decree. The Senate Judiciary Committee seeks Zatko’s testimony on Twitter’s security failures and “foreign state actor interference” on the platform. But the documents also raise questions that congressional investigators, and legislators in other countries, should ask about what the company is doing to protect elections specifically.

Another congressional body that may be interested in Zatko’s disclosures on that subject is the House Select Committee investigating the January 6, 2021 attack on the U.S. Capitol, which included anonymous testimony from another Twitter whistleblower in its July 12 hearing earlier this summer. The documents released by Zatko indicate he had a particular concern that there might be “acts of internal protest [within the company] aligned with the rioters,” which caused him to query how to limit employee access to Twitter’s production environment. According to the whistleblower complaint filed with the Securities and Exchange Commission and sent to Congress, when he looked into systems for such internal security threats, “he learned it was basically nothing. There were no logs, nobody knew where data lived or whether it was critical, and all engineers had some form of critical access to the production environment.” What gave Zatko reason to fear such actions by a rogue employee, and have sufficient steps been taken to secure these systems?

What’s more, on Aug. 26, 2021, the Select Committee sent a letter to then-Twitter CEO Jack Dorsey requesting copies of any reviews, internal or external, relating to “[m]isinformation, disinformation, and malinformation relating to the 2020 election” produced since April 1, 2020, as well as a variety of other information, including regarding the activities of violent extremists on the platform. The Alethea Group report makes numerous references to a Twitter document called “US 2020 Civic Integrity Policy/Ops/Product Reflections.” Was this document provided to the Select Committee? For that matter, were the Alethea report, the “subsequent report” it promises, or any of the other “19 internal documents, retrospectives, and training guides” it references provided to the committee?

More broadly, though, lawmakers in the U.S. and in other democracies need to understand in more detail how social media platforms such as Twitter organize themselves, both in terms of human and financial resources, to mitigate mis- and disinformation in the context of elections. What is the appropriate level of spending or investment in labor necessary to ensure citizens can “trust the election conversations and content they encounter on Twitter,” as the company has promised? And how can a country know if its election cycle has been designated worthy of receiving the attention of Twitter’s site integrity and safety teams, and if the company has appropriate language and cultural expertise to meet the challenge?

Establishing a baseline for what is an appropriate investment is difficult. But years into what is now a chronic problem, social media platforms such as Twitter can no longer be permitted to respond to elections as ad hoc “events.” “Twitter is a critical resource to the entire world,” Zatko told CNN. “Your whole perception of the world is made from what you are seeing, reading and consuming online. And if you don’t have an understanding of what’s real and what’s not -- yeah, I think this is pretty scary.”
