Social Cohesion Technologies and Online Projects

Tim Bernard / Apr 3, 2023

Tim Bernard recently completed an MBA at Cornell Tech, focusing on tech policy and trust & safety issues. He previously led the content moderation team at Seeking Alpha, and worked in various capacities in the education sector.

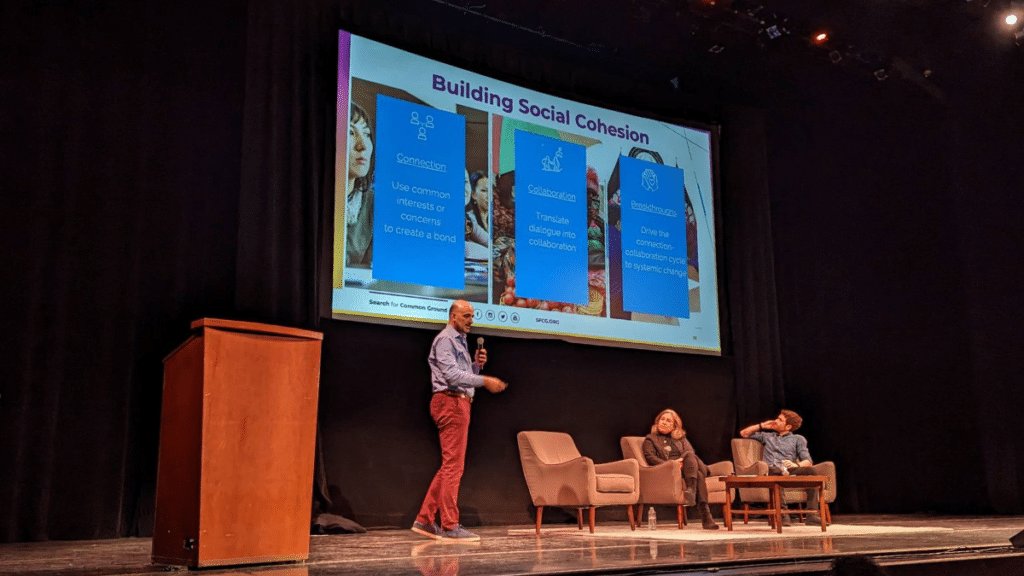

The Designing Tech for Social Cohesion conference that took place in San Francisco in February showcased a range of online projects and technologies that could fall under the umbrella of PeaceTech, each relevant, in varying ways, to the endeavor of reducing toxic polarization and building social cohesion. The examples that were presented at the conference are described here, categorized into:

- Components that can be integrated into actual products;

- Tools for peacebuilders, whether for government research, full-scale endeavors run by teams of professionals, or for enthusiasts trying to have better discussions across political divides;

- Complete peacebuilding projects that make substantial use of technology.

(A different classification of PeaceTech projects can be found in Part VIII of Dr. Lisa Schirch’s article, “The Case for Designing Tech for Social Cohesion: The Limits of Content Moderation and Tech Regulation.”)

Basic components

- Perspective API (Google Counter Abuse Technology team / Jigsaw)

Perspective API is a machine learning classifier that scores submitted text for toxicity and a number of other anti-social attributes. It was created with web comments sections and other online text-based conversation forums in mind, motivated by the premise that abusive comments silence the voices of others, excluding them from the conversation. Perspective API is used by comments platforms like Disqus and direct publishers like The New York Times, as well as at Reddit. The main use-cases are: automatic flagging of comments for moderators to review; giving commenters an opportunity to choose less inflammatory language; and enabling readers to hide comments scored as toxic. Future directions for the project include finding ways to incentivize actively constructive conversation.
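The three use cases above can be sketched as a small routing function over a toxicity score. This is only an illustration under stated assumptions: the thresholds and the names `route_comment` and `visible_to_reader` are hypothetical, and the score is passed in directly rather than fetched from Perspective API.

```python
from dataclasses import dataclass

# Hypothetical thresholds; a real deployment would tune these per community.
REVIEW_THRESHOLD = 0.8  # queue the comment for a human moderator
NUDGE_THRESHOLD = 0.6   # invite the author to reconsider their wording


@dataclass
class Decision:
    flag_for_review: bool
    nudge_author: bool


def route_comment(toxicity: float) -> Decision:
    """Map a toxicity score in [0, 1] to publisher-side actions."""
    if not 0.0 <= toxicity <= 1.0:
        raise ValueError("toxicity must be between 0 and 1")
    return Decision(
        flag_for_review=toxicity >= REVIEW_THRESHOLD,
        nudge_author=toxicity >= NUDGE_THRESHOLD,
    )


def visible_to_reader(toxicity: float, reader_max: float) -> bool:
    """Reader-side filter: hide comments scored above the reader's own limit."""
    return toxicity <= reader_max
```

Keeping the reader-side filter separate from the publisher-side routing mirrors the distinction in the text: moderators and commenters act before or at publication, while readers choose their own tolerance afterwards.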

- Bridging Algorithms (Aviv Ovadya, Affiliate, The Berkman Klein Center for Internet & Society at Harvard University)

Social media platforms such as Facebook, Instagram, YouTube, and TikTok, as well as Twitter’s “For You” page, rank or recommend content using algorithms that optimize primarily for “engagement,” which is taken to reflect the revealed preferences of users. There is evidence that this promotes and incentivizes the creation of polarizing content. Rather than insisting that platforms present only algorithmically unranked content (e.g. chronological feeds), Ovadya has designed and advocated for bridging algorithms as an alternative. These promote content that is approved of by a critical mass of people on each side of a political divide. With them, social media platforms could potentially not only cease worsening toxic polarization but actively contribute to social cohesion. Remesh and Polis (including its adaptation in Twitter’s Community Notes), detailed below, are examples of using bridging algorithms outside of typical social media feeds.
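One simple way to picture the idea is to score each item by its lowest approval rate across groups, so that an item ranks highly only when every side approves of it. The sketch below is an assumption-laden toy, not Ovadya's design or any platform's ranking system: the group labels, the `min_ratings` cutoff, and the vote counts are all made up.

```python
from typing import Dict, List, Tuple

# votes[item][group] = (approvals, total_ratings); all numbers are invented.
Votes = Dict[str, Dict[str, Tuple[int, int]]]


def bridging_score(per_group: Dict[str, Tuple[int, int]], min_ratings: int = 5) -> float:
    """Return the lowest approval rate across groups.

    A group with too few ratings yields 0.0, so thin data cannot carry an item.
    """
    rates = []
    for approvals, total in per_group.values():
        if total < min_ratings:
            return 0.0
        rates.append(approvals / total)
    return min(rates) if rates else 0.0


def rank_by_bridging(votes: Votes) -> List[str]:
    """Order items from most to least bridging."""
    return sorted(votes, key=lambda item: bridging_score(votes[item]), reverse=True)


votes = {
    "partisan_jab": {"left": (95, 100), "right": (5, 100)},
    "local_story": {"left": (70, 100), "right": (65, 100)},
    "rival_jab": {"left": (10, 100), "right": (90, 100)},
}
ranking = rank_by_bridging(votes)
```

An engagement-style ranking that summed approvals would treat all three items as roughly equal; taking the minimum across groups puts `local_story` first.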

- Online Dispute Resolution (ODR.com, National Center for Technology and Dispute Resolution at UMass-Amherst, International Council for Online Dispute Resolution)

Online Dispute Resolution (ODR) takes the insights of conflict resolution theory and practice and uses them to inform the design of platforms where conflict occurs, in order to facilitate conflict resolution at scale. The first major implementation of ODR was on eBay, and ecommerce has continued to be a focus of the practice, though it has even been applied in the context of international conflict. Technology is effectively a mediator, providing a structure for parties in conflict to find resolution. To make this mediation effective, design decisions, such as providing discrete checkbox reasons for why a buyer is unhappy with their purchase rather than an open text field where they might vent their displeasure, can incrementally encourage empathy and build relationships between parties in conflict.

Tools for peacebuilders

- Information gathering tools

Phoenix is a process for social media research with a suite of social media listening tools designed for peacebuilding projects. The process is designed to answer the questions: What can be mediated? and What can be addressed through grassroots peacebuilding? Phoenix’s tools gather data from the platforms, organize it, label it using ML classifiers, and generate visualizations. Though other commercial tools are available to analyze social media and surface insights, these are mostly designed for marketing purposes, and have not proved suitable for peacebuilders. Uses so far have included: monitoring trends that can lead to violence in Lebanon; identifying polarizing identity issues in Kurdistan; surfacing county-level differences in hate speech around elections in Kenya; and understanding narratives about violence on the Somaliland-Puntland border. Currently, BuildUp facilitates implementation of the Phoenix tools, but they are now working on making them self-deployable and open-source.

Polis is an open-source platform to gather and make sense of opinions at scale using machine learning. It was inspired by decision-making techniques adopted by the Occupy movement and is focussed on providing a resource for deliberative democracy. It has been used by government agencies around the world, including in Taiwan to solve a conflict regarding the impact of Uber on the local taxi industry and by the UK Department of Education for internal opinion gathering. Polis’s platform works by users submitting short comments and voting on the comments of others. Polis then determines what the different opinion segments are and, using a bridging algorithm, identifies the comments that are rated highly by reasonable numbers of individuals in opposing factions, thus providing some concrete information about where common ground can be found. Polis served as a key inspiration for Twitter’s Birdwatch program (now “Community Notes”).
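The vote-then-segment-then-bridge flow described above can be illustrated with a toy example. This is a sketch under loud assumptions: the tiny two-group k-means here is a stand-in for whatever clustering Polis actually performs, and the function names, the 0.6 threshold, and the vote data are hypothetical.

```python
from typing import List

Vector = List[int]  # one participant's votes: +1 agree, -1 disagree, 0 pass


def sq_dist(a, b) -> float:
    """Squared Euclidean distance between two vote vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def two_means(rows: List[Vector], iterations: int = 10) -> List[int]:
    """Split participants into two opinion groups with a tiny k-means (k=2)."""
    first = rows[0]
    farthest = max(rows, key=lambda r: sq_dist(r, first))
    centroids = [list(map(float, first)), list(map(float, farthest))]
    labels = [0] * len(rows)
    for _ in range(iterations):
        labels = [
            0 if sq_dist(r, centroids[0]) <= sq_dist(r, centroids[1]) else 1
            for r in rows
        ]
        for k in (0, 1):
            members = [r for r, lab in zip(rows, labels) if lab == k]
            if members:
                centroids[k] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def common_ground(rows: List[Vector], labels: List[int], threshold: float = 0.6) -> List[int]:
    """Indices of comments that at least `threshold` of each group agrees with."""
    result = []
    for c in range(len(rows[0])):
        rates = []
        for k in (0, 1):
            votes = [r[c] for r, lab in zip(rows, labels) if lab == k]
            rates.append(sum(v == 1 for v in votes) / len(votes) if votes else 0.0)
        if min(rates) >= threshold:
            result.append(c)
    return result


# Made-up data: rows are participants, columns are comments 0, 1 and 2.
rows = [
    [1, -1, 1], [1, -1, 1], [1, -1, 0],
    [-1, 1, 1], [-1, 1, 1], [-1, 1, -1],
]
labels = two_means(rows)
shared = common_ground(rows, labels)
```

In this toy data the two factions split cleanly on comments 0 and 1, while two thirds of each faction agree with comment 2, so it is the only one surfaced as common ground.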

Remesh is, like Polis, a platform that uses an algorithm to identify “bridging statements” across factional divisions amongst a large group of participants. Remesh, however, is more like an advanced focus group, conducted live, where the facilitators can see the surfaced responses in real time and ask follow-up questions. It has a polished, user-friendly interface and is used for commercial purposes such as market research as well as for civic benefit. Remesh has facilitated conversations sponsored by the UN to understand where the local population stands on polarizing topics in countries such as Iraq, Haiti, Bolivia, and Libya, where the session was broadcast live on television.

- Tools for individuals

Karin Tamerius is a political psychiatrist who has investigated how to change incentives to get “trolls,” i.e. those who communicate in a polarizing vernacular, especially online, to act prosocially. She has created a number of chatbots intended to train individuals to follow the best practices she has developed for engaging with trolls constructively, rather than ineffectively resorting to coercion or ostracism.

Those on either side of the partisan divide in the US very often believe that those on the other side are more likely to hold extreme beliefs than is actually the case. This leads to the erroneous and harmful assumption that the divide between them is unbridgeable. The Perception Gap is an online quiz that measures how an individual understands the views of those with differing political affiliations and then compares that to the reality. The vast majority of participants discover that those across the aisle are less extreme than they previously thought, and they will hopefully see more potential in engaging with them in the future.

- Measuring polarization

The Social Cohesion Impact Measures (SCIM) were designed to measure the impact of bridge-building programs in the US. They are applied using pre- and post-intervention surveys and an automated analysis tool. The political scientists developing SCIM first sought to measure affective polarization, and then, after consultations with the pilot clients, added other civic health factors, including: intellectual humility, intergroup empathy, pluralistic norms, humanization and moral outrage.
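The pre- and post-intervention comparison can be pictured as an average per-respondent change on each measure. The sketch below is not SCIM's analysis tool: the 1 to 5 scale, the function name `mean_change`, and the survey responses are assumptions made for illustration, with measure names borrowed from the list above.

```python
from statistics import mean
from typing import Dict, List

Survey = Dict[str, List[float]]  # measure name -> one score per respondent


def mean_change(pre: Survey, post: Survey) -> Dict[str, float]:
    """Average per-respondent change (post minus pre) for each measure.

    Assumes the same respondents appear in the same order in both surveys.
    """
    changes = {}
    for measure, before in pre.items():
        after = post[measure]
        if len(before) != len(after):
            raise ValueError(f"unpaired responses for {measure!r}")
        changes[measure] = mean(a - b for a, b in zip(after, before))
    return changes


# Invented responses on a hypothetical 1-5 scale for three participants.
pre = {"intergroup_empathy": [2.0, 3.0, 4.0], "moral_outrage": [4.0, 4.0, 5.0]}
post = {"intergroup_empathy": [3.0, 4.0, 4.0], "moral_outrage": [3.0, 4.0, 4.0]}
changes = mean_change(pre, post)
```

A positive value means the measure rose after the program and a negative value means it fell; whether a rise is desirable depends on the measure, since more empathy and less outrage would both count as improvements.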

Mercy Corps has developed a framework and survey, though not a technology per se, for use around the world, focusing on building social cohesion that reduces conflict and advances resilient peace. Mercy Corps currently measures six dimensions that appear to be most relevant to the desired outcomes: trust, belonging, civic engagement, collective action norms, shared identity, and attitudes toward other groups. The scores are presented on a multi-dimensional chart, at both aggregated and segmented (e.g. individual village) levels, to show variations between measures and between segments and to identify areas of strength and weakness.

Online Peacebuilding Projects

In Sri Lanka, mainstream media very often contains racial and gender bias, which impedes its potential as a space for society-enhancing information and discussion. Ethics Eye monitors a range of major news sources and holds them accountable by posting examples of bias on Facebook and Twitter. Despite the drawbacks of discourse on these platforms, the problems in mainstream media sources are a much more salient issue in contemporary Sri Lanka. (Verité Research also has five other online projects monitoring the government and media in Sri Lanka.)

Soliya is a peacebuilding not-for-profit organization that operates its programs on its own online video platform, bringing people from across the world together across lines of difference. They designed their platform to best facilitate dialogue, insist on small groups with trained facilitators, and employ neuropsychologists to measure program impacts. Programs primarily serve young adult populations and aim to build cross-cultural communication skills.

- Supplementing Intergroup Dialogue on Facebook (Arik Segal, Conntix)

Arik Segal has used Facebook groups to extend the impact of the in-person, Cognitive Behavioral Therapy-informed dialogue groups that he runs for Israelis and Palestinians. Previously, once the main dialogue sessions ended and participants re-entered their communities, the impact of the sessions waned quickly. Now, participants join a Facebook group for their cohort to keep them connected. Segal uses various affordances of the platform to continue the facilitation. He is currently developing an AI facilitator that could be incorporated into a platform to enable continuous moderation of large numbers of cohort groups.

Transitional platforms

Gatheround facilitates workplace conversations in a platform designed with peacebuilding concepts at the forefront. They provide a library of templates for employees to engage with each other in more compelling and effective ways than basic video meeting platforms allow, with features such as small groups, no self-view, and prompts guiding participants to step up or step back so that all voices are heard.

Slow Talk was started by a co-founder of Soliya, and is also currently focused on providing tools for workplaces to engage employees in conversations, though it aspires to grow beyond that market with its mission of deploying dialogue at scale. Its programs begin with a prompt from an internal leader or external expert followed by a structured group audio discussion. Metrics and insights are extracted from the conversation and provided to the organizer. The higher-level business concept is to provide users with lasting bonding experiences, rather than short-term dopamine hits.

Bonus: What one metric would you optimize for?

UC Berkeley’s Jonathan Stray served as moderator for one panel. He asked the participants what they would design a social media feed algorithm to optimize for. These were the responses:

- Ravi Iyer, Psychology of Technology Institute, USC Marshall: Meaningful experiences in last 30 days

- Julia Kamin, Civic Health Project: Addressing civic alienation

- Adrienne Brooks, Mercy Corps: Trust

- Emily Saltz, Jigsaw: Satisfaction

- Jonathan Stray: Being heard
