Researchers Detail Use of Bots to Quash Russian Opposition, Cheerlead for Putin

Justin Hendrix / Feb 22, 2022

Last week, as tensions increased on the Ukraine-Russia border, Ukraine’s Security Service (SBU) said it had disrupted a network of “bot farms” and seized technology assets that were supported by Russian online services in five Ukrainian cities. According to the news service RFERL, the announcement stated that the network was used to pump “fake information about the situation in Ukraine, incite protests, scare the public, send notices of bomb threats at critical infrastructure sites,” in addition to trolling Ukrainian government officials.

The use of such tactics attributed to the Russian regime is, at this point, unsurprising. But what is arguably less well understood is how the Russian government and its domestic allies employ bot networks to help quash opposition domestically. In a new paper published this week in the American Political Science Review, researchers from Russia's HSE University, Princeton and New York University detail the effects of pro-regime bot networks on political communication in the Russian Twittersphere.

“Automated bots are cheap, hard to trace, and can be deployed at a very large scale,” said study coauthor Joshua A. Tucker, an NYU professor and co-director of the Center for Social Media and Politics (CSMaP). “This makes them an easy way for regimes to inflate support and manipulate the information environment, and for other political actors to promote certain policies and signal loyalty to the regime.”

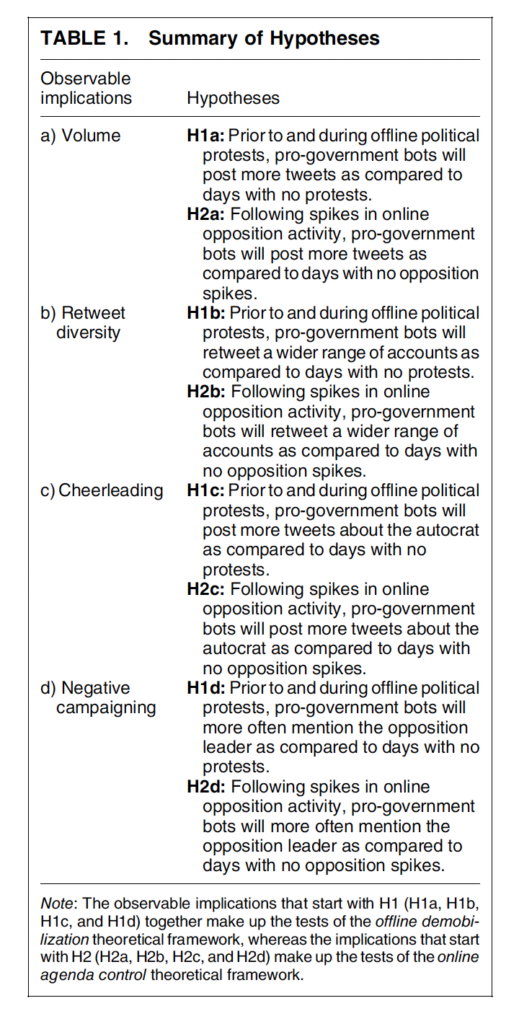

Noting that "academic research on the ways authoritarian regimes use social media bots, defined as algorithmically controlled social media accounts, in the context of domestic politics is scarce," the researchers set out to "bridge the gap between scholarship from the field of computer science on bot detection and research in political science on authoritarian politics by reverse-engineering the use of social media bots in a competitive authoritarian setting and identifying specific political strategies that can be pursued with the use of bots." They developed "testable hypotheses about the way social media bots can be used to counter domestic opposition activity either online or offline."

Prior research on authoritarian regimes indicates they regard control of the information environment as important for a number of reasons, including to maintain the "widespread belief" that any opposition is weak and unorganized, and that joining it carries risks, including to participants' physical security and the possibility of future persecution. But while silencing critics and amplifying a preferred message is standard in the authoritarian playbook, it can be harder to do "in times of large-scale collective actions" such as during anti-regime protests. At these times, regimes and their domestic allies may engage in various tactics to increase "the expected costs of getting involved in offline or online opposition activities," thus protecting the regime's interests.

This led the researchers to look specifically at the "observable implications for bot behavior during offline protests and increased online opposition activity," using a database to identify offline protests and activity related to 15 specific Twitter accounts belonging to activists, journalists and media entities that demonstrated support for the Russian opposition, all between 2015 and 2018. These accounts included, for instance, the independent blogger Rustem Adagamov; the exiled oligarch Mikhail Khodorkovsky; the former Russian politician, Putin critic and activist Boris Nemtsov (assassinated near the Kremlin in 2015); and the account for Dozhd (TV Rain), an independent media entity declared a "foreign agent" by the Kremlin last summer. To collect a sample of bots, the researchers used a tool specifically designed to detect bots on Russian political Twitter, and then measured the political orientation of the bots, netting 1,516 pro-government Twitter bots and over a million tweets. These data allowed the researchers to test four main hypotheses.

The researchers were able to conclude that "pro-government Twitter bots tweet more and retweet a more diverse set of accounts when there are large street protests or opposition activists post an unusually large number of tweets," confirming the hypotheses that the bots respond to volume and increase their retweet diversity in such moments. But the results around the other hypotheses challenged their assumptions. For instance, with regard to "cheerleading," "the expected number of tweets that mention Vladimir Putin is" in fact "smaller on protest days than on days without a protest." The bottom line, though, is that these bot networks can indeed produce meaningful shifts in the volume and sentiment of political tweets in moments when opposition activity is pronounced.
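
To make the volume comparison concrete, here is a minimal sketch of how one might compare average daily bot output on protest days with other days. It is not the researchers' method, which relies on more involved statistical models. The records, day labels, and the `mean_by_protest` helper are hypothetical, and the counts are invented purely for illustration.

```python
from statistics import mean

# Hypothetical toy records: (day label, bot tweets that day, protest that day?)
# These numbers are invented for illustration and are NOT from the study.
daily_counts = [
    ("day 1", 410, False),
    ("day 2", 395, False),
    ("day 3", 720, True),
    ("day 4", 450, False),
    ("day 5", 690, True),
]


def mean_by_protest(records):
    """Return (mean tweets on protest days, mean tweets on other days)."""
    protest = [n for _, n, is_protest in records if is_protest]
    quiet = [n for _, n, is_protest in records if not is_protest]
    return mean(protest), mean(quiet)


protest_mean, quiet_mean = mean_by_protest(daily_counts)

# A ratio above 1 would indicate more bot activity on protest days
# in this toy data set.
ratio = protest_mean / quiet_mean
print(f"protest days: {protest_mean:.1f}, other days: {quiet_mean:.1f}, ratio: {ratio:.2f}")
```

A naive comparison of means like this ignores trends, seasonality and other confounders, which is one reason a real analysis would use a regression framework instead.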

While the overall effect on political outcomes is difficult to determine, the researchers conclude that "social media bots constitute a sort of 'universal soldier' that can be activated for various purposes and in a variety of situations," part of the digital toolkit for authoritarian regimes, alongside other tactics such as disinformation campaigns, denial-of-service attacks and forcing internet service providers to block or censor online content.

“In a 2018 article, my colleagues and I argued that authoritarian regimes have three ways to respond to online opposition: response offline (arrests, violence, change laws); restrict access to content; or try to change the conversation online,” said Tucker. “China was known for pioneering ways to restrict access to content online, whereas Russia was being innovative in terms of trying to change the conversation online. Having bots that assist with regime goals are very much in line with this third category of trying to change the nature of the conversation online.”
