On Elon Musk's Vision of Twitter as a Hive Mind

Joe Bak-Coleman / Nov 3, 2022

Dr. Joe Bak-Coleman is an associate research scientist at the Craig Newmark Center for Journalism Ethics and Security at Columbia University.

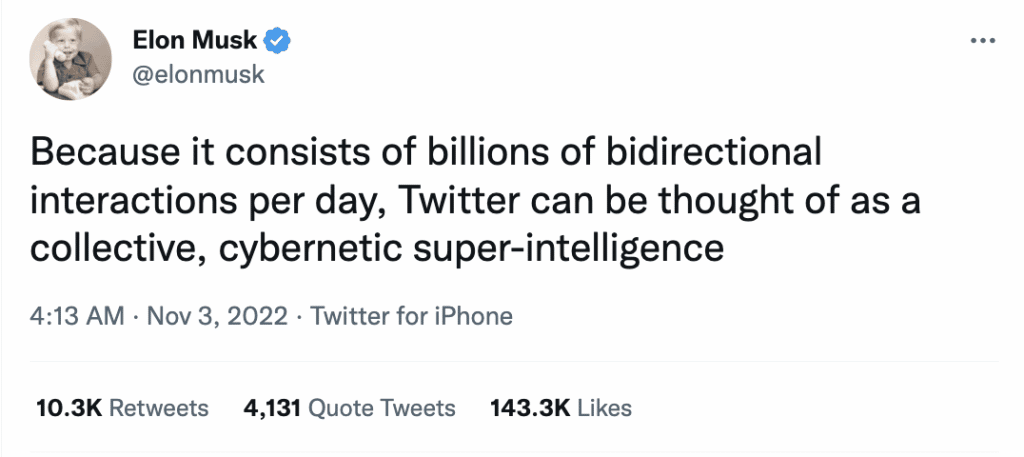

Elon Musk recently tweeted that Twitter, the social media platform he now owns, can be thought of as a “collective, cybernetic super-intelligence” because it consists of “billions of bi-directional interactions per day.” I tend to steer clear of the Musk discourse, but as a researcher who studies complex systems and collective behavior, I found that this particular utterance caught my attention.

The notion that a social media platform may represent a novel form of human intelligence is appealing because in many ways it is familiar. As a species, we have a long history of imagining the superhuman potential of collectives. This idea of collective intelligence or cognition is quite old, stretching back (at least) to Aristotle. In Politics, Book III, he wrote:

For the many, of whom each individual is but an ordinary person, when they meet together may very likely be better than the few good, if regarded not individually but collectively, just as a feast to which many contribute is better than a dinner provided out of a single purse.

This thinking can be found everywhere, from the rationale for collective systems such as juries and democracies, to recent popular science books about harnessing the “wisdom of the crowd.”

Rather than a potluck, Elon draws on the analogy of a brain: millions of neurons (not realizing they’re neurons, of course) make up brains capable of navigating an uncertain world and effectively deciding how to act. Given that many of our largest challenges involve failing to act collectively as a global society, the idea that we can convert our scattered behavior into something coherent is tantalizing…

Back to Aristotle. A few paragraphs after the quote above, he reflects on the fact that collective intelligence does not seem to be guaranteed in all contexts. He writes:

Whether this principle can apply to every democracy, and to all bodies of men, is not clear. Or rather, by heaven, in some cases it is impossible of application; for the argument would equally hold about brutes; and wherein, it will be asked, do some men differ from brutes?

Put differently, under what conditions can we expect a group of individuals to act cohesively and effectively as a collective, and in what circumstances does human behavior prevent such action? I believe this is a defining question for science in the coming decades, as we develop systems that alter our interactions with one another and with technology, all the while facing challenges like climate change, pandemics, and war that threaten our existence as a species.

Of course, the question isn’t wholly unanswered. We know that our minds function as a consequence of millions of years of natural selection shaping the structure of the brain to promote functioning and decision-making. And we know that it is not the number of neurons and connections between them that makes brains work; it’s how those neurons interact.

Yet neurons are ultimately a poor analogy for individual human behavior. As a collection of cells with identical DNA bound to live or die together, neurons share a common goal and have no reason to compete, cheat, or steal. Even if we had a full model of how the brain works, we couldn’t directly apply it to human behavior because it wouldn’t account for competitive dynamics between humans.

To understand how collective behavior can occur among selfish individuals, we might do well to start by looking at animal groups. Animal groups have a profound capability to accomplish collective action, even if their members are unrelated and in competition. Schools of fish dazzle and evade predators. Flocks of birds navigate vast distances. Zebras trade off guard duty so they can take time off to eat.

The success of animal groups might seem to bode well for our potential to work on collective concerns. If they can do it, surely we can as well. We're smarter, after all; we have electricity and build rocket ships. Of course, the devil is in the details.

Over the last several decades, collective behavior scientists have truly begun to understand how animal groups achieve these tasks. It's remarkably complex, but themes emerge: groups are constrained in size or are modular and hierarchical, individuals pay attention to only a few neighbors, and so on. By contrast, a “global community” on social media with no such constraints could potentially be counter-productive.

Just like the brain, the functioning of these groups is entirely dependent on their structure. Simple models of collective behavior in animal groups recover their functional properties. We understand how interactions enable collective navigation, predator avoidance, and decision-making.

Through this, we’ve learned how remarkably sensitive emergent behavior can be to the structure and nature of interactions between individuals. Changing the network's size or structure, altering how information is shared, or adding feedback tends to degrade collective behavior into failed states.

So, Elon’s premise that Twitter can behave like a collective intelligence only holds if the structure of the network and the nature of interactions are tuned to promote collective outcomes. Everything we know suggests the design space that would promote effective collective behavior at scale, if it exists, is quite small compared to the possible design space on the internet.

Worse, it might not overlap with other shorter-term goals: profitability, free speech, safety and avoiding harassment... you name it. It's entirely possible that we can't, for instance, have a profitable global social network that is sustainable, healthy, and equitable.

What happens then? What if the sustainable, equitable and healthy design conflicts with values and profitability in the short term? What if it requires accepting harm to a large group of people? Do we accept a broken world later for X now?

Unfortunately for Musk, this is not rocket science. While we have very clear mathematical models of orbital trajectories and decades of experience sending people into space, we have no such understanding of how to arrange and coordinate human behavior at scale in a way that doesn't send us into the ocean (perhaps literally). We only know enough to know it's hard.

When it comes to Elon Musk and his ownership of one of the world’s most important social media platforms, we often get lost in discussing whether he's benevolent or has a political bias. In my opinion, that's all secondary to the fact that he's taken on responsibility for a problem we have no idea how to solve, despite thousands of years of trying.

But perhaps thinking about “cybernetic super-intelligence” is more interesting to Musk than some of the more mundane problems he’ll face as Twitter’s “Chief Twit.” Either way, if he truly believes in the promise of harnessing digital media to produce positive collective behavior, he should expand his commitment to working with researchers who study these questions, and provide them with the resources, both financial and data, to do the work.
