“It’s not you, Juan, it’s us”: How Facebook takes over our experience

Elinor Carmi / Jan 28, 2021

Yesterday we learned that Facebook wants to reduce political content on its platform, and is considering changes to its News Feed to accomplish that goal. During a quarterly earnings call, CEO Mark Zuckerberg said that “people don’t want politics and fighting to take over their experience”. But just the day before, the team that manages the machine learning systems that govern the News Feed wrote a post about how users are the ones who decide ‘what is meaningful to them’ on the platform.

Confused? It’s not you, it’s them.

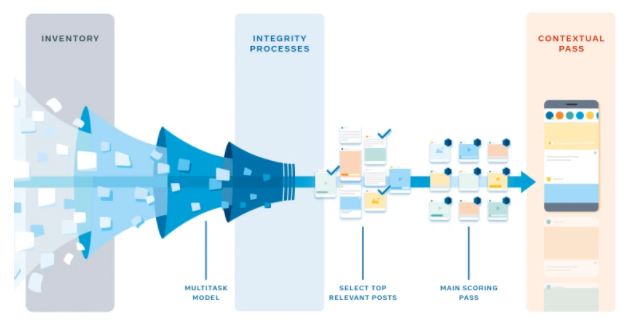

On Tuesday 26th January, executives at Facebook in engineering, data science and product management published a blog post on “How machine learning powers Facebook’s News Feed ranking algorithm.” The post offers “new details of how we designed an ML-powered News Feed ranking system.” Told through the perspective of a hypothetical user named “Juan,” the Facebook team explain how their system works to create “long term value” for Juan, informed by surveys that ask users “how meaningful they found an interaction with their friends or whether a post is worth their time” in order to “reflect what people say they find meaningful.”

Sounds great. But here is what they don't tell you:

First, Facebook spies on your behavior (whether you are a subscriber or not) within and outside the platform with cookies and pixels to inform the ranking. Its surveillance of user behavior off the platform is extensive and hard to limit, even if you try.

Second, Facebook says they survey people about what is meaningful to them and that this is the primary driver in its model. But as I show in my book, Media Distortions: Understanding the Power Behind Spam, Noise and Other Deviant Media, the platform changed my newsfeed preference from Most Recent to Top Stories against my wishes dozens of times. I also show how the company developed different interface features, including Memories and the Audience Selector, to influence people’s emotions (nostalgia) or sense of security in order to drive more engagement. The Audience Selector, for example, was introduced in 2014 and presented as if it gave people control. But it was an interface design meant to tackle the ‘problem’ of people who self-censor. That raises the question: what is Facebook's "values (Yijtk)" factor, and how does it factor in other user input that should trump it?

Third, Facebook argues they rank content to create a ‘positive experience’, but as Kevin Roose recently reported, the company has conducted experiments to determine how best to promote civility and has decided ultimately to reintroduce content that had negative effects because removing it made people less likely to come to the platform.

Fourth, another factor that affects ranking is the input of its legion of content moderators, which Facebook didn't really acknowledge until researchers like Sarah Roberts surfaced their work. That means that commercial content moderators are part of the process of ordering and filtering what users engage with. As they argue, without their moderation Facebook would be unusable.

Fifth, as I say in my latest piece for Real Life Magazine on the ways in which content appears on Facebook, "platforms don’t just moderate or filter 'content'; they alter what registers to us and our social groups as 'social' or as 'experience'". Over time, the cumulative effect of Facebook shapes how we interpret the world, and also how we understand what actions are possible. As Siva Vaidhyanathan poignantly says, Facebook “undermines our collective ability to distinguish what is true or false, who and what should be trusted, and our abilities to deliberate soberly across differences and distances is profoundly corrosive.”

Importantly, the main factor that guides Facebook is its economic interest, which leads us to the final point. In its explanation of its system, Facebook completely neglects the core source of its existence: advertising! Facebook exists because companies pay money at auction to influence what we will engage with, when and where. The company has demonstrably prized advertising even over its own policies. As The Markup recently reported, based on its monitoring of paid users' feeds through the Citizen Browser Project, partisan political ads ran in Georgia ahead of the November 2020 election despite the company’s claims that it would restrict them.

But again, none of this is new:

- 30 June 2020 - Facebook said it will prioritize original news reporting.

- 16 May 2019 - Facebook said it will prioritize Pages and Groups.

- 9 May 2019 - Facebook said it will prioritize videos.

- 19 April 2019 - Facebook said it will reduce “the reach of Facebook Groups that repeatedly share misinformation”.

- 11 June 2018 - Facebook said it will “prioritize posts from friends and family over public content”.

- 29 June 2016 - Facebook said it will reduce the reach of brands and news sites and prioritize friends.

- 1 March 2016 - Facebook said it will prioritize live video.

These are just the tweaks that Facebook reveals. But the company conducts experiments on our News Feed all the time, without our knowledge or consent. Each tweak represents a change in the business model that is influenced by pressure from policy-makers, investigative journalists, business deals and new products the company wants to launch. None of these changes has to do with any user surveys Facebook conducts, the results of which are never available to users or to the public. All of these tweaks have repercussions on how we understand politics, economy, health and our societies, and they are not necessarily meaningful or positive.

Presenting its ranking as merely computational helps Facebook make it appear 'objective' and removes the impression that it relies on human decision making. But behind what they call “product,” “science” and “engineering” stands a multi-layered online ecosystem that consists of human and nonhuman systems working to filter and order various elements so that we engage longer on the platform according to its definition of sociality. Importantly, this presentation helps conceal the economic rationale that influences every step of this complex procedure.

Don't buy it.
