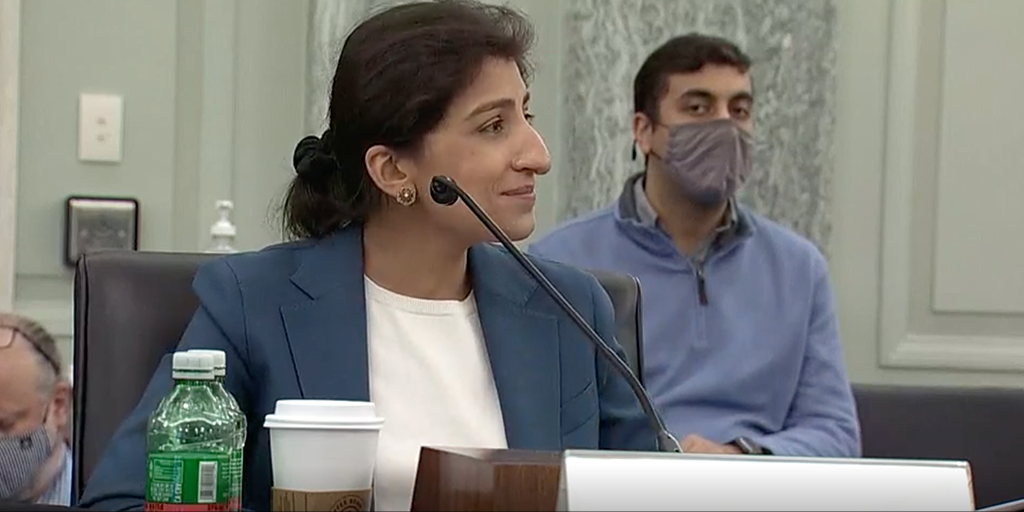

FTC Chair Lina Khan Addresses "Surveillance Economy"

Justin Hendrix / Apr 12, 2022

Lina Khan, Chair of the Federal Trade Commission (FTC), gave a keynote address at the International Association of Privacy Professionals (IAPP) conference in Washington, DC on Monday. Noting that "greater adoption of workplace surveillance technologies and facial recognition tools is expanding data collection in newly invasive and potentially discriminatory ways," Khan said that the "central role the digital tools will only continue to play invites us to consider whether we want to live in a society where firms can condition access to critical technologies and opportunities on users having to surrender to commercial surveillance."

Khan said when it comes to the FTC's enforcement priorities, "we're seeking to harness our scarce resources to maximize impact, particularly by focusing on firms whose business practices cause widespread harm. This means tackling conduct by dominant firms as well as intermediaries that may facilitate unlawful conduct on a massive scale."

She said the FTC has a "growing reliance on technologists alongside the skilled lawyers, economists, and investigators who lead our enforcement work. We have already increased the number of technologists on our staff drawing from a diverse set of skill sets, including data scientists and engineers, user design experts, and AI researchers. And we plan to continue building up this team."

She also noted that she believes "we need to reassess the frameworks we presently use to assess unlawful conduct."

What follows is a lightly edited transcript of Khan's remarks. Video is available here.

Hello everybody, it's great to see you all. Thanks so much to Trevor and IAPP for the invitation to speak with you all today, it's a tremendous honor to be here.

So it's a striking moment to be discussing the state of data privacy and security today, with the landscape having shifted so significantly even over the last few years. The pandemic hastened the digitization of our economy and society, further embedding digital technologies deeper into our lives, with schools, workplaces, and all manner of life switching over to virtual formats effectively overnight. We also saw that this digital transition was not experienced equally by all Americans, since many still lack access to reliable internet and affordable personal technologies. The experience of the last couple of years has both illustrated the tremendous benefits of these tools as well as the challenges and risks posed by this growing dependence.

We've seen how security vulnerabilities can have sweeping effects disrupting fuel supply for an entire segment of the country and halting meat processing operations nationwide. We've also seen how privacy breaches can be materially consequential with violations exposing millions of children during the course of doing their schoolwork or resulting in the purchase and sale of individuals' sensitive health data. Meanwhile, greater adoption of workplace surveillance technologies and facial recognition tools is expanding data collection in newly invasive and potentially discriminatory ways.

Americans are aware of the stakes and the potential hazards. One survey showed that close to two-thirds of Americans believe that it is no longer possible to go through daily life without companies collecting data about them while over 80% feel that they have meager control over the data collected on them and believe that the risks of data collection by commercial entities outweigh the benefits. Against this backdrop, the Federal Trade Commission is charged with ensuring that our legal tools and our approach to law enforcement keep pace with market developments and business practices. With its longstanding expertise in how companies collect and deploy Americans' data, along with its unique combination of enforcement, policy, and research tools, the FTC is especially well suited to this task.

In my remarks today, I will offer a few observations about the new political economy of how Americans' data is tracked, gathered, and used; identify a few ways that the Federal Trade Commission is refining its approach in light of these new market realities; and lastly, share some broader questions that I believe these realities raise for the current frameworks we use for policing the use and abuse of individuals' data.

Concerns about Americans' privacy have long preceded the digital age. Louis Brandeis and Samuel Warren, in 1890, famously sought to define anew the exact nature and extent of privacy protections guaranteed by law in the face of 'recent inventions and business methods'.

At bottom, they explained the law protects people from the unwanted prying eyes of private actors almost in the same way that it protects against physical injury. Similarly, lawmakers in 1970 passed the Fair Credit Reporting Act, the first federal law to govern how private businesses could use Americans' personal information. The law prescribed the types of information that credit reporting agencies could use and guaranteed a person's right to see what was in their file, a recognition of the unique harms that can result when firms have unchecked power to create dossiers on people that can be used to then grant or deny them opportunities.

Though these basic principles governing what types of personal information businesses can and cannot collect and use extend back decades, the context in which we must now apply them today looks dramatically different. Digital technologies have enabled firms to collect data on individuals at a hyper granular level tracking not just what a person purchased, for example, but also their keystroke usage, how long their mouse hovered over any particular item, and the full set of items that they viewed but did not buy. As people rely on digital tools to carry out a greater portion of daily tasks, the scope of information collected also becomes increasingly vast, ranging from one's precise location and full web browsing history to one's health records and complete network of family and friends.

The availability of powerful cloud storage services and automated decision-making systems, meanwhile, has allowed companies to combine data across domains and retain and analyze it in aggregated form at an unprecedented scale, yielding stunningly detailed and comprehensive user profiles that can be used to target individuals with striking precision. Some firms, like weather forecasting or mapping apps, for example, may primarily use this personal data to customize service for individual users. Others can also market or sell this data to third-party brokers and other businesses in ancillary or secondary markets that most users may not even know exist. Indeed, the general lack of legal limits on what types of information could be monetized has yielded a booming economy built around the buying and selling of this data.

Firms can provide services for $0 while monetizing personal information, a business model that seems to incentivize endless tracking and vacuuming up of users' data. Indeed, the value that data brokers, advertisers, and others extract from this data has led firms to create an elaborate web of tools to surveil users across apps, websites, and devices. As one scholar has noted, today's digital economy represents probably the most highly surveilled environment in the history of humanity. While these data practices can enable services and forms of personalization that could in some instances be benefiting users, they can also enable business practices that harm Americans in a host of ways. For example, we've seen that firms can target scams and deceptive ads to consumers who are most susceptible to being lured by them. They can direct ads in key sectors like health, credit, housing, and the workplace based on consumers' race, gender, or age, engaging in unlawful discrimination.

Collecting and sharing data on people's physical movements and online activity meanwhile can endanger them enabling stalkers to track them in real time. And failing to keep sensitive personal information secure can also expose users to hackers, identity thieves, and cyber threats. The incentive to maximally collect and retain user data can also concentrate valuable data in ways that create systemic risk increasing the hazards and costs of hacks and cyber attacks. Some, moreover, have also questioned whether the opacity and complexity of digital ad markets could be enabling widespread fraud and masking a major bubble.

Beyond these specific harms, the data practices of today's surveillance economy can create and exacerbate deep asymmetries of information, exacerbating in turn imbalances of power. As numerous scholars have noted, businesses' access to and control over vast troves of granular data on individuals can give these firms enormous power to predict, influence, and control human behavior. In other words, what's at stake with these business practices is not just one's subjective preference for privacy over the long term but one's freedom, dignity, and equal participation in our economy and our society. Our talented FTC teams are focused on adapting the commission's existing authority to address and rectify unlawful data practices.

A few key aspects of this approach are particularly worth noting. First, we're seeking to harness our scarce resources to maximize impact, particularly by focusing on firms whose business practices cause widespread harm. This means tackling conduct by dominant firms as well as intermediaries that may facilitate unlawful conduct on a massive scale. For example, last year the commission took action against OpenX, an ad exchange that handles billions of advertising requests involving consumer data. And the company was alleged to have unlawfully collected information from services directed at children. We intend to hold accountable dominant middlemen for consumer harms that they facilitate through unlawful data practices.

Second, we are taking an interdisciplinary approach assessing data practices through both the consumer protection and competition lens. Given the intersecting ways in which wide scale data collection and commercial surveillance practices can facilitate violations of both consumer protection and antitrust laws, we are keen to marshal our expertise in both areas to ensure that we are grasping the full implications of particular business conduct and strategies. Also key to our interdisciplinary approach is our growing reliance on technologists alongside the skilled lawyers, economists, and investigators who lead our enforcement work. We have already increased the number of technologists on our staff drawing from a diverse set of skill sets, including data scientists and engineers, user design experts, and AI researchers. And we plan to continue building up this team.

Third, when we encounter law violations, we are focused on designing effective remedies that are directly informed by the business incentives that various markets favor and reward. This includes pursuing remedies that fully cure the underlying harm and where necessary deprive law breakers of the fruits of their misconduct. For example, the commission recently took action against Weight Watchers' subsidiary Kurbo alleging that the company had illegally harvested children's sensitive personal information, including their names, eating habits, daily activities, birthdate, and persistent identifiers. Our settlement required not only that the business pay a penalty for its law breaking but also that it delete its ill-gotten gain and destroy any algorithms derived from that data.

Where appropriate, our remedies will also seek to foreground executive accountability through prophylactic limits on executives' conduct. In our action against Spy Phone, for example, the FTC banned both the company and its CEO from the surveillance business, resolving allegations that they had been secretly harvesting and selling real-time access to data on a range of sensitive activity. Lastly, we are focused on ensuring that our remedies evolve to reflect the latest best practices in security and privacy. In our recent action against CafePress, for example, our settlement remedied an alleged breach by requiring the use of multifactor authentication, reflecting the latest thinking in secure credentialing. Even without a federal privacy or security law, the FTC has for decades served as a de facto enforcer in this domain, using Section 5 of the FTC Act and other statutory authorities to crack down on unlawful practices.

No doubt we will continue using our current enforcement tools to take swift and bold action. The realities of how firms surveil, categorize, and monetize user data in the modern economy, however, invite us to consider how we might need to update our approach further yet. First, the Commission is considering initiating a rulemaking to address commercial surveillance and lax data security practices. Given that our economy will only continue to further digitize, market-wide rules could help provide clear notice and render enforcement more impactful and efficient. Second, I believe we need to reassess the frameworks we presently use to assess unlawful conduct. Specifically, I am concerned that the present market realities may render the notice and consent paradigm outdated and insufficient. Many have noted the ways that this framework seems to fall short given both the overwhelming nature of privacy policies and the fact that they may very well be beside the point.

When faced with technologies that are increasingly critical for navigating modern life, users often lack a real set of alternatives and cannot reasonably forego using these tools. Going forward, I believe we should approach data privacy and security protections by considering substantive limits rather than just procedural protections, which tend to create process requirements while sidestepping more fundamental questions about whether certain types of data collection and processing should be permitted in the first place. The central role the digital tools will only continue to play invites us to consider whether we want to live in a society where firms can condition access to critical technologies and opportunities on users having to surrender to commercial surveillance. Privacy legislation from Congress could also help usher in this type of new paradigm. Thank you again for inviting me to speak with you all today. This is an incredibly exciting and momentous time for these issues with a lot at stake and a tremendous amount of work to be done. And I'm looking forward to working with you all as we chart forward the path ahead. Thank you.
