Bots in Congress: The Risks and Benefits of Emerging AI Tools in the Legislative Branch

Daniel Schuman, Marci Harris, Zach Graves / Feb 8, 2023

Zach Graves is executive director at Lincoln Network and a fellow at the National Security Institute at George Mason University. Daniel Schuman is policy director at Demand Progress. Marci Harris is Executive Director of POPVOX Foundation, an adjunct professor at the University of San Francisco and a lecturer at San Jose State University.

In the last year, we’ve seen huge improvements in the quality and range of generative AI tools—including voice-to-text applications like OpenAI’s Whisper, text-to-voice generators like Murf, text-to-image models like Midjourney and Stable Diffusion, language models like OpenAI’s ChatGPT and GPT-3, and others. Unlike the clunky AI tools of the past (sorry, Clippy), this suite of technologies is increasingly able to replicate and outpace work done by humans.

Already, legislators are experimenting with these tools to augment their work. In January, Rep. Ted Lieu (D-CA) penned an op-ed in the New York Times with the help of an AI, and subsequently introduced an AI-drafted bill. Around the same time, Rep. Jake Auchincloss (D-MA) read an AI-generated speech on the House floor. While these examples may feel gimmicky and attention-seeking, AI tools will soon bring real disruptive innovation to legislatures, with substantial benefits as well as risks.

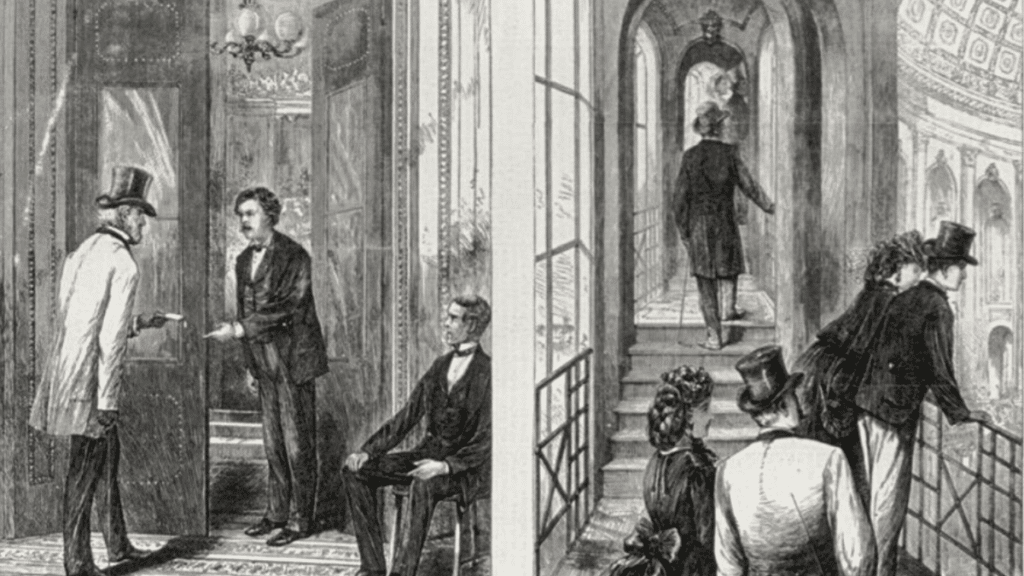

In the past, new technologies have repeatedly transformed the institution of Congress—and the American people’s relationship with it. Many of these transformative technologies were communications tools: the telegraph, the telephone, broadcast television, email, and social media. But other innovations had just as significant an effect. New modes of transportation like trains and air travel changed how much time members spent in their districts, and air conditioning allowed for more work to be done during DC’s hot summer months.

On net, new technologies have facilitated increased productivity, greater transparency, and more ways for direct democratic engagement. But they have also created new challenges, in the form of new expectations, perverse incentives, and disruptions. Generative AI tools will be no different. Congress must take a deliberate approach to test and learn how these technologies can be applied, and to set boundaries that acknowledge their limitations.

AI for Congress

As impressive as these new tools are, they won’t fix the myriad causes of congressional dysfunction, and they may make some problems worse. But there are some big problems that they could help solve, like Congress’s lack of sufficient communications capacity.

In their daily operations, congressional offices must contend with a tsunami of information, including mass emails from advocacy groups, correspondence from donors, tweets from constituents, letters from staff and colleagues, dense policy white papers, and various other communications. As a paper from the Harvard Belfer Center observed, Congress suffers from “a failure of absorptive capacity: the ability of an organization to recognize the value of new, external information, to assimilate it, and to apply it to desired ends.”

New AI tools are particularly well suited to alleviate Congress’s strained capacity to absorb and process information. They promise to automate the drudgery of routine operations, helping separate noise from signal, and freeing their human counterparts to focus on high-value work. Specifically, AI could help improve the speed and efficiency of a range of office tasks, including:

- Drafting routine communications such as op-eds, press releases, social media posts, speeches, dear colleague letters, oversight letters, casework letters, constituent letters, witness questions, and similar materials.

- Summarizing incoming information such as government documents, hearing transcripts, bulk emails, and interest group communications.

- Leveraging legislative data from Congress.gov and a half-dozen other sources for strategic insights. This could include analysis of potential co-sponsors, insights about past Congresses, or assessments for how likely a bill is to move.
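As one concrete illustration of separating noise from signal in bulk email, the sketch below groups near-identical form letters so that staff could count a campaign once and spend their time on the personal messages. It is a minimal, non-AI baseline under stated assumptions, not a description of any tool a congressional office actually uses: the function names, the sample inbox, and the 0.5 similarity threshold are all hypothetical choices made for the example.

```python
def shingles(text, k=3):
    """Return the set of k-word shingles (word windows) in lowercased text."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def group_form_letters(messages, threshold=0.5):
    """Greedily cluster messages whose shingle sets overlap above threshold.

    Each message joins the first existing group whose representative
    (the group's first message) is similar enough; otherwise it starts
    a new group. Returns a list of lists of message indices.
    The threshold is an arbitrary assumption and would need tuning.
    """
    groups = []  # each entry: (representative shingle set, member indices)
    for idx, text in enumerate(messages):
        sh = shingles(text)
        for rep, members in groups:
            if jaccard(sh, rep) >= threshold:
                members.append(idx)
                break
        else:
            groups.append((sh, [idx]))
    return [members for _, members in groups]


# Hypothetical inbox: two copies of a campaign template and one personal note.
inbox = [
    "Please vote yes on the clean water bill to protect our rivers and lakes. Thank you, Ana",
    "Please vote yes on the clean water bill to protect our rivers and lakes. Sincerely, Bo",
    "My VA claim has been stuck for eight months and I need help from your office",
]
clusters = group_form_letters(inbox)
print(clusters)
```

Here the two template emails land in one group and the casework request stands alone. A language model could then be asked to summarize each group, but any such output would still need the human review discussed in the rest of this piece.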

One place where generative AI is less likely to be useful in the near term is drafting legislation. This is because the legislative drafting process requires highly specific expertise, attention to detail, and contextual decision making: demands that current large language models (LLMs) are not well suited to meet.

While current AI models may be helpful for basic communications work, they should not be relied upon to provide policy expertise. As OpenAI notes in the product documentation for ChatGPT, people should expect it to sometimes give “plausible-sounding but incorrect or nonsensical answers.” This is unacceptable in a legislative context, as even small word differences (e.g., “shall” vs. “may”) carry significant weight.

While AI accuracy is improving at an astounding rate, this improvement is unlikely to extend to niche domains requiring expensive and specialized training, at least in the short term.

In theory, Congress could create its own co-pilot-like AI tool to assist with legislative drafting, leveraging open source repositories, the Congressional Record, and other legislative information, reinforced over time with human feedback. Such a tool could provide value in time-sensitive tasks like drafting amendments, empowering congressional offices that might otherwise have to wait in line for the Office of Legislative Counsel to process their requests.

But there are good reasons to be wary of this idea. Building cutting-edge AI tools is far from the core competency of Congress or its limited vendor ecosystem. It would be resource intensive in terms of funding and staff time, and there’s no guarantee it would ever achieve an acceptable error rate. At least for now, there are many other areas where investment is likely to have higher rewards and lower risks.

The clear opportunity for Congress is to use AI to free up staffing hours from communications and lower-level office tasks, much as typewriters and computers once boosted productivity. But in contrast to word processors, Wikipedia, or Google Search, AI tools will require disciplined human oversight and adherence to best practices.

These productivity benefits could greatly strengthen the institution of Congress at a time when it is struggling with severe resource constraints. This follows decades of diminishing capacity for oversight, legislation, and absorption. Several factors are responsible for this trend, including:

- Legislative branch funding constraints, following the “cut Congress first” politics of the mid-1990s, as well as the incentive structure of its appropriations subcommittee.

- Resources needed to respond to the steadily increasing volume of incoming communications—including email, social media, phone calls, and other media.

- The increasing demands of constituent engagement with growing district sizes (consider that while the size of the House has been fixed at 435 voting members since 1913, the population has roughly tripled in that time).

- Growing resource demands of security and facilities functions such as the Architect of the Capitol and the Capitol Police.

While the legislative branch saw a nearly $1 billion increase in fiscal year 2023, this is likely to be undone following a reported deal with conservative factions in the House to return to pre-2022 discretionary spending levels.

Moving forward, as Congress considers how to realize the benefits of internal use of AI tools, key stakeholders will need to proactively organize and get ahead of the issue. To that end, Congress should:

- Issue RFPs and coordinate with vendors—such as providers of Constituent Relationship Management platforms—to integrate with LLMs and other generative AI tools, and adapt them to congressional use cases.

- Establish a working group of key stakeholders in each chamber to set best practices for AI tools. In the House, this should be led by the Committee on House Administration and the Chief Administrative Officer. It should also coordinate with the Congressional Data Task Force.

- Conduct interviews and hold hearings with AI experts to better understand how AI tools should be used in Congress. This should include engagement with leading firms like OpenAI, Anthropic, Microsoft, Google, and others.

- Commission congressionally chartered entities such as the National Academy of Sciences and the National Academy of Public Administration to conduct parallel studies on legislative branch use of AI tools.

Rise of the (lobbying) machines

In addition to internal use of productivity-enhancing AI tools, we can expect a wide range of outside interest groups to use AI to influence Congress. Because Congress is decentralized, and structurally closer to democratic pressures and incentives, these threats could be more difficult to respond to than their equivalents in the executive branch (e.g., regulatory comments). Similar to its past work addressing the influx of bulk communications, Congress will soon have to develop strategies to address the ways in which it processes these new forms of engagement.

Congress functions as a deliberative forum where interest groups and factions can engage in the democratic process. In practice, this means that Congress receives a massive volume of advocacy communications, including emails, phone calls, meeting requests, and yes, even faxes.

These communications originate from a wide range of sources, including average citizens, organized grassroots groups, trade associations, corporate lobbyists, and government agencies. But there is also a darker spectrum of influence operations, such as pay-for-play think tanks, astroturf groups, and foreign influence campaigns.

AI tools could make the latter easier to implement, and harder to detect. Imagine, for instance, a hostile nation-state actor using public data to identify major campaign donors, cloning their voices and speech patterns from YouTube, and then using a text-to-speech chatbot to impersonate them in calls to key senators ahead of a major floor vote. Similarly, a campaign of unique, human-sounding, spoofed constituents could give the impression of grassroots support for or opposition to a bill.

Some in civil society are already raising the alarm. A recent op-ed by Bruce Schneier and Nathan Sanders in the New York Times warned that AI-enhanced lobbying could hijack our democracy. They write, “while it’s impossible to predict what a future filled with A.I. lobbyists will look like, it will probably make the already influential and powerful even more so.”

Critical to the ultimate outcome is how AI tools will be deployed and controlled: specifically, whether they are free and open source, or captured by governments and large firms, locked behind walled gardens and proprietary APIs. As Schneier and Sanders write, there’s a world where democratized AI could help give “lobbying power to the powerless.” In other words, if we democratize AI, it can help strengthen our democracy by opening up access to influence and lowering barriers to the organization of effective interest groups.

Furthermore, massive increases in AI-fueled advocacy, met with AI-assisted responses from congressional offices and agencies, might ultimately prompt a healthy rethinking of the actual goals of these practices. In the old days, congressional staff described mail as an indicator of constituent sentiment measured “by the word and by the pound.” But today, there are better ways for a Member of Congress to connect with and understand the opinions and experiences of constituents. ChatGPT may be the accelerant that brings the demise of the old system.

Already the new GOP majority in the House plans to focus on field hearings and efforts to “bring Congress to the People.” If new tools lead to an advocacy arms race, then we may actually see a return to more analog, “human” forms of engagement—like getting real people in a room together.
